Table of contents

How to make a process map: A step-by-step guide

Understand process mapping basics.

Let's kick things off with a simple truth: even the most straightforward processes can benefit from a bit of visual clarity.

Think about something as routine as making a sandwich. Easy, right? But break it down into steps, and you might discover more efficient ways to get it done—like gathering all your ingredients at once instead of making multiple trips to the pantry.

That's where process mapping shines. It's a visual tool that helps you see your current processes and uncover areas for optimization. Whether you're streamlining workflows, identifying bottlenecks, or enhancing team collaboration, a clear process map can be a game-changer for your product team.

A process map provides a detailed visual representation of each step involved in a workflow, making it easier to understand, analyze, and improve the process. By visualizing the flow of tasks, decision points, and interactions, you can quickly spot inefficiencies and areas for improvement. This can lead to significant gains in productivity and efficiency, ensuring that your team operates at its best.

Define the scope and objectives of your process map

Before diving into creating your process map, it's crucial to determine the scope and objectives. This sets the foundation for a successful mapping session.

Identify the process

Start by pinpointing the specific process you want to map. It could be anything from a user journey to an internal workflow.

Consider processes that significantly impact your team's productivity or those that frequently encounter issues. These are prime candidates for process mapping. By focusing on high-impact processes, you can ensure that your efforts will yield meaningful improvements. Gather input from your team to identify pain points and areas that could benefit from a more structured approach.

Set clear goals

What do you hope to achieve? Are you looking to identify inefficiencies, improve collaboration, or streamline operations? Clear objectives will guide your mapping efforts.

Define specific, measurable goals for your process mapping project. For example, you could reduce the time required to complete a task by 20%, eliminate unnecessary steps, or improve communication between departments. Having well-defined goals will help you stay focused and measure the success of your process improvements. Communicate these goals to your team to ensure everyone is aligned and understands the purpose of the process mapping exercise.

Gather your team

Process mapping is a collaborative activity. Bring together stakeholders from different departments to ensure you have a comprehensive view of the process.

Assemble a diverse team of people directly involved in or affected by the process you're mapping. This might include product managers, designers, engineers, and other key stakeholders. Each team member brings a unique perspective, crucial for creating an accurate and effective process map. Encourage open communication and collaboration to ensure all viewpoints are considered. This collaborative approach enhances the quality of your process map and fosters a sense of ownership and commitment among team members.

Collect necessary data

Gather all relevant information and documents related to the process. This might include existing workflows, performance data, and feedback from team members.

Before you begin mapping:

Conduct a thorough analysis of the current process.

Review any existing documentation, performance metrics, and feedback from team members.

Identify areas where the process breaks down or where improvements are needed.

Collecting comprehensive data will provide a solid foundation for your process map and help you make informed decisions. Use surveys, interviews, and observation to gather insights from those directly involved in the process. This data-driven approach ensures that your process map is based on real-world conditions and challenges.

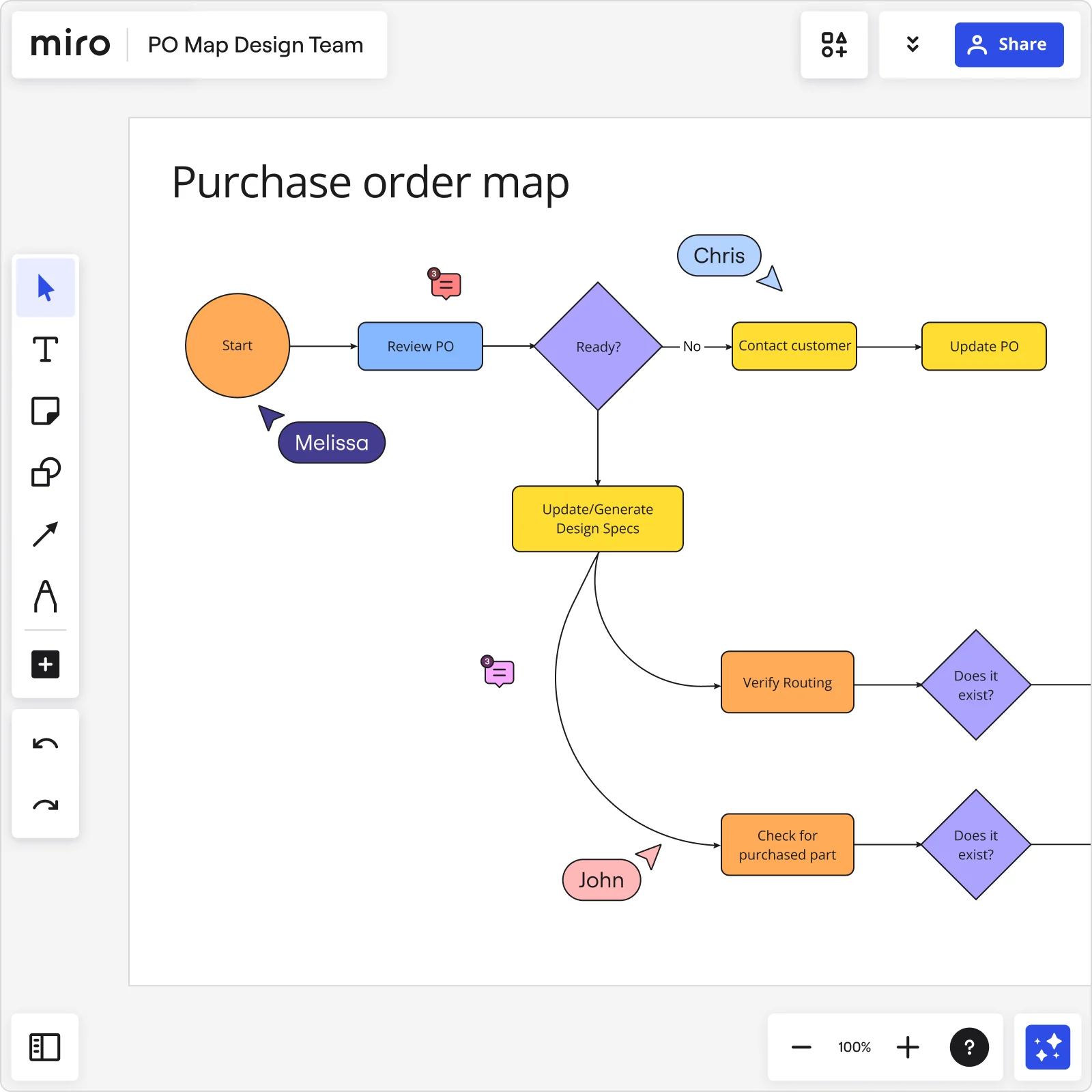

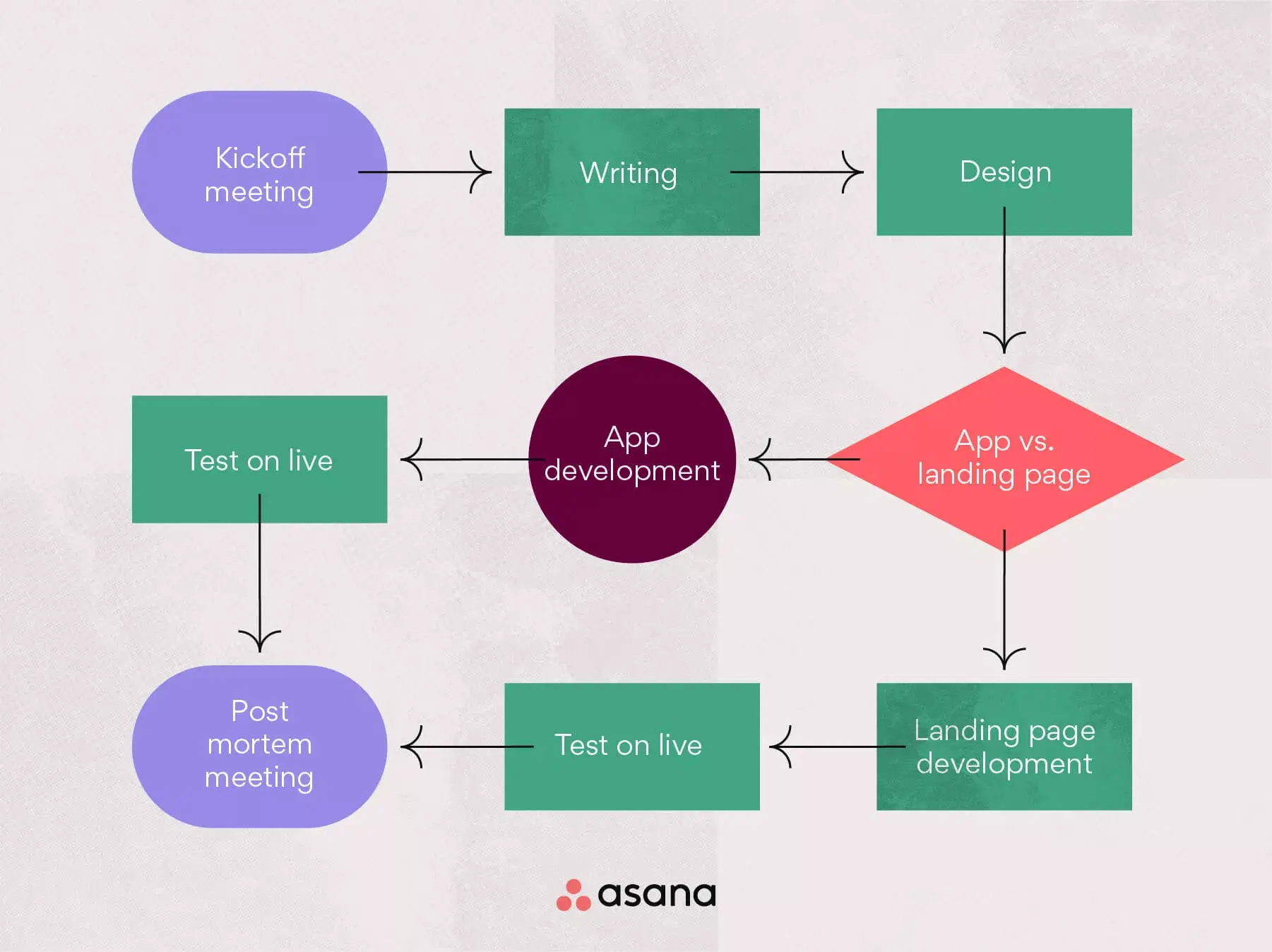

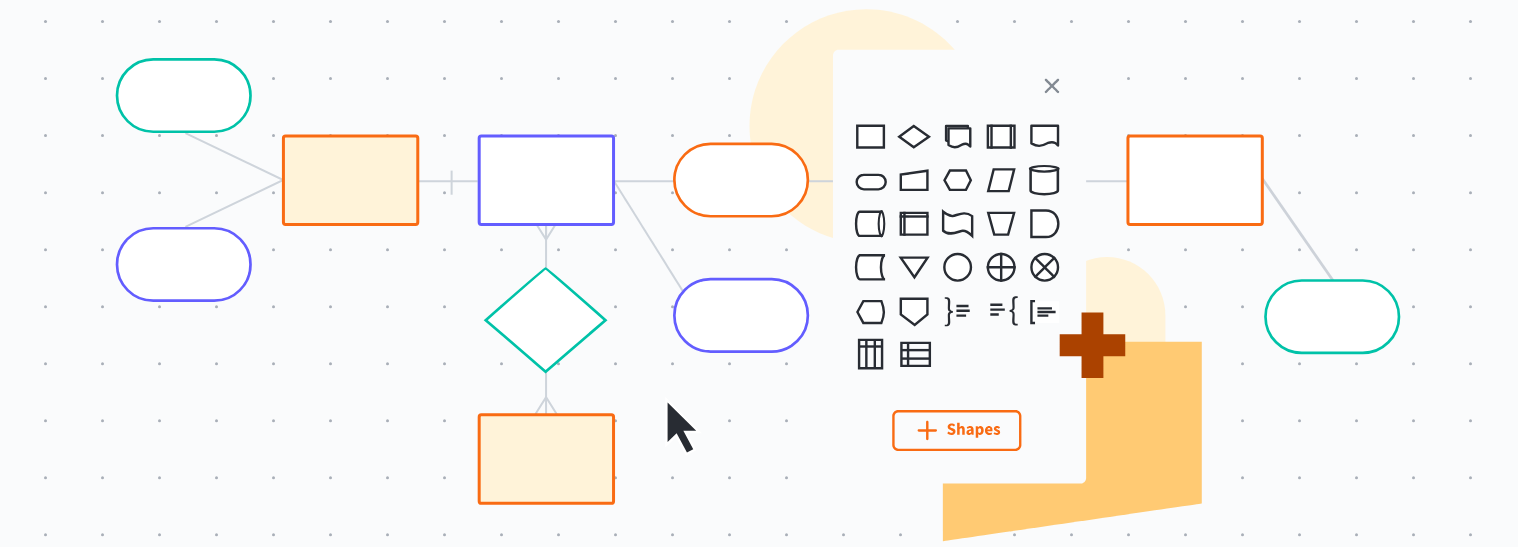

Process mapping step by step in Miro

Creating a process map in Miro is straightforward, thanks to our robust visual workspace for innovation. Here's how to get started:

1. Define your process steps

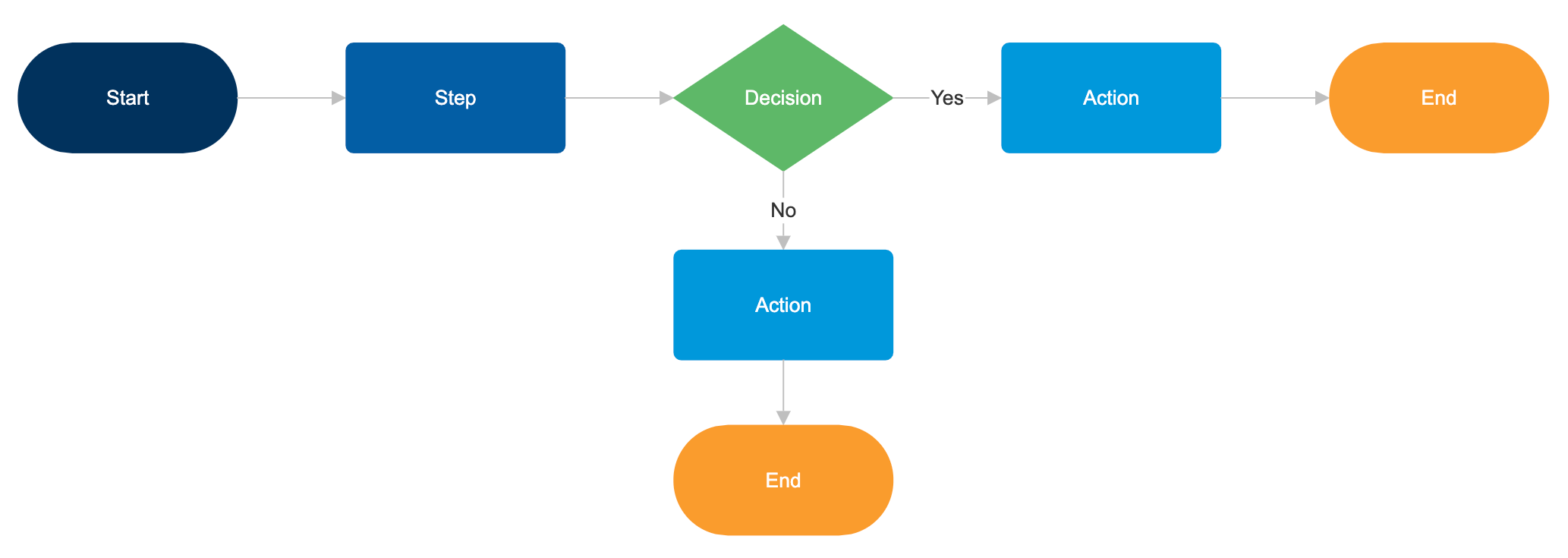

List out each step involved in your process. Use Miro's advanced diagramming shapes pack to add clarity and detail. For instance, use rectangles for tasks, diamonds for decision points, and arrows to indicate flow.

Break down the process into individual steps, focusing on tasks, decision points, and interactions. Use clear, descriptive labels for each step to ensure everyone understands the process.

Miro's advanced diagramming shapes pack provides a broad selection of options for creating detailed diagrams:

Rectangles can be used to represent tasks.

Diamonds indicate decision points.

Arrows show the flow of the process, ensuring logical connections between each step.

2. Map out decision points

Complex decision points can be challenging, so it is important to clearly delineate paths and potential outcomes. This ensures that everyone understands the potential directions a process can take based on different variables.

Decision points are critical junctures in your process where different paths may be taken based on specific criteria. Clearly mapping out these points is essential for understanding how decisions impact the overall process.

Use Miro's advanced diagramming capabilities to create detailed decision trees, showing all possible outcomes and their implications. This helps identify potential bottlenecks and ensures that everyone understands the decision-making process.

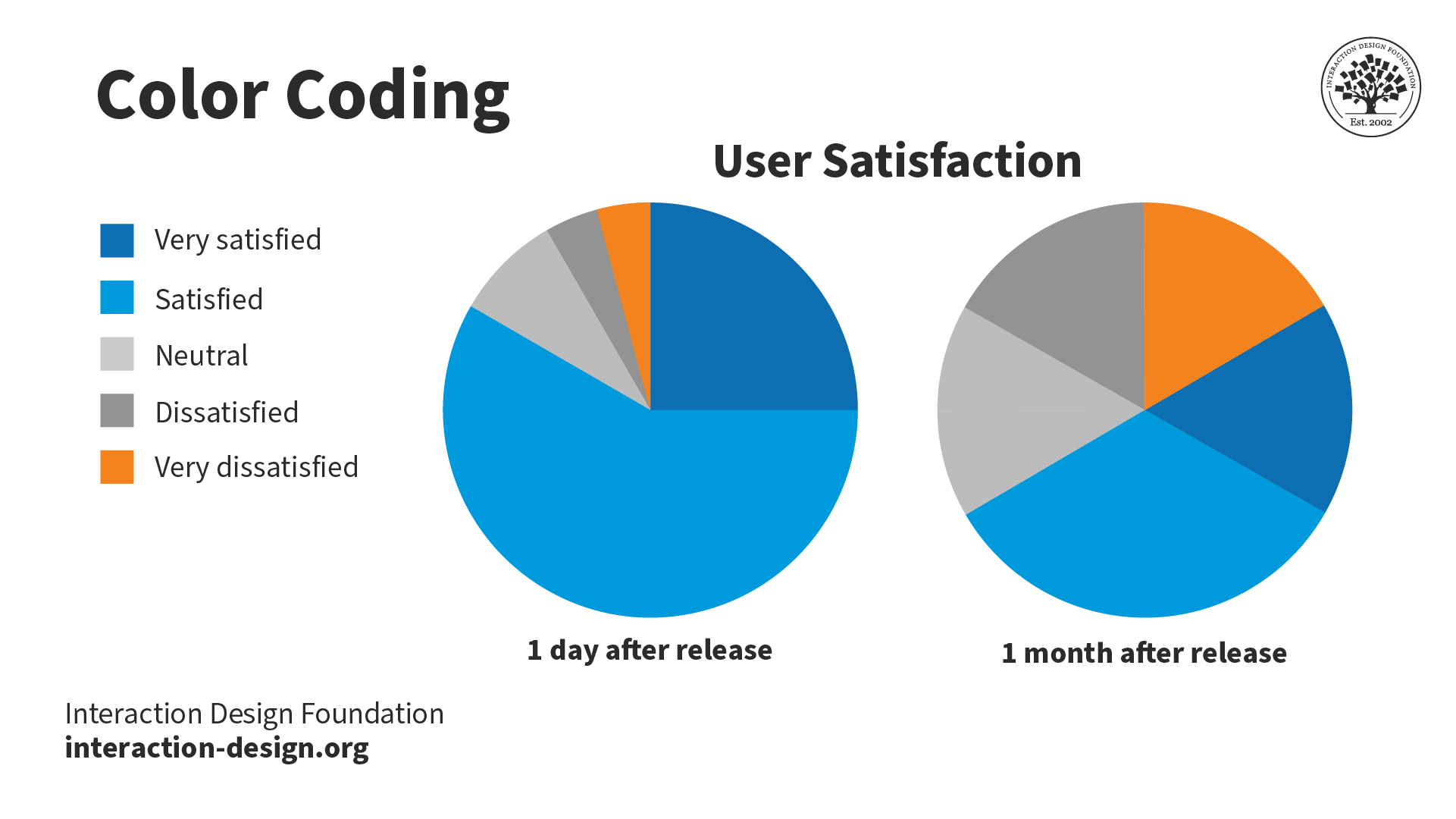

Incorporate color-coding or other visual cues to differentiate between various paths and outcomes, making the map more intuitive and easier to follow.

3. Identify bottlenecks and inefficiencies

Look for areas where tasks are repeated, issues escalate, or single points of failure exist.

Once you have mapped out the process, review it carefully to identify bottlenecks and inefficiencies. These are points in the process where tasks are delayed, repeated, or where issues tend to escalate.

Use Miro's visual workspace to highlight these areas, making them easy to spot. Miro AI can assist by analyzing your map and suggesting optimizations. For example, it might identify steps that can be combined or streamlined or highlight areas where automation could improve efficiency. By addressing these bottlenecks, you can significantly enhance the overall performance of your process.

4. Integrate feedback seamlessly

Collaboration is key. Invite your team to review the process map directly on your Miro board.

Effective process mapping requires input from all stakeholders. Use Miro's collaboration features to invite your team to review the process map. They can add comments, suggest changes, and provide feedback in real time. This ensures that all perspectives are considered and that the final process map reflects the collective knowledge and experience of the team.

5. Refine and finalize your map

Incorporate the feedback and make necessary adjustments. After gathering feedback, it's time to refine and finalize your process map. Incorporate the suggestions and make any necessary adjustments.

Miro's advanced diagramming capabilities make this process straightforward. You can easily shift parts of the process, add new steps, or remove obsolete ones. This flexibility ensures that your process map remains accurate and up-to-date. Use version control features to track changes and maintain a history of revisions. Once finalized, your process map should provide a clear, comprehensive view of the workflow, ready to guide your team toward greater efficiency and effectiveness.

6. Save and share

Once your process map is complete, save it and share it with your team.

Miro's cloud-based platform ensures that everyone on your team has access to the latest version, no matter where they are. Use the sharing options to distribute the map to all relevant stakeholders. For easy distribution, you can also export the map in various formats, such as PDF or image files. Regularly review and update the map to reflect any changes or improvements. By keeping the process map accessible and up-to-date, you ensure that it remains a valuable tool for ongoing process management and optimization.

What's next? Implement and monitor the process

Your process map is more than just a diagram—it's a roadmap for success. Here's how to make the most of it:

1. Implement changes

Begin by prioritizing the changes that will have the most significant impact. Assign responsibilities to team members and set clear timelines for implementation. Use Miro to track progress and document any challenges or adjustments needed. Regular updates to the process map ensure that it remains a living document, reflecting the current state of the process. This ongoing documentation helps maintain alignment and provides a clear record of improvements and changes.

2. Monitor performance

Establish key performance indicators (KPIs) to measure the effectiveness of the implemented changes. Regularly review these metrics to assess the impact on efficiency, productivity, and overall performance. This continuous monitoring helps ensure that the process remains optimized and that any emerging issues are promptly addressed.

3. Scale and adapt

Growth often brings new challenges and opportunities. Add new steps, integrate new tools, and adjust workflows to accommodate changing requirements. Miro's flexible platform ensures that your process maps can grow with your organization, providing a scalable solution for ongoing process management. Regularly revisit and refine your process maps to ensure they continue to meet the needs of your team and organization.

4. Foster continuous collaboration

Effective process management is an ongoing effort that requires continuous collaboration. Encourage your team to regularly review the process map and provide feedback. Miro's collaboration features make it easy for team members to share their insights and contribute to the process map. This continuous feedback loop ensures the process map remains dynamic and relevant, reflecting the latest insights and improvements. Foster a culture of continuous improvement by regularly discussing process enhancements in team meetings and making collaboration a core part of your process management strategy.

Map processes with confidence

Process mapping is a powerful way to visualize and improve workflows, identify bottlenecks, and enhance collaboration within your product team. Creating and maintaining process maps becomes a breeze with Miro's advanced diagramming capabilities and seamless integration features.

Ready to transform your processes? Explore Miro's visual workspace for innovation and take your process mapping to the next level. Happy mapping!

What is process mapping?

Process mapping, production workflow template, process mapping examples, join our 70m+ users today, plans and pricing.

7 Process Visualization Use Cases: Your Complete Manual

Key takeaways

- Process visualizations simplifies understanding and communicating complex workflows.

- Process visualization diagrams cater to different analytical needs and use cases.

- Effective application of visuals can streamline operations and facilitate team collaboration.

Process visualization is an essential tool that empowers you to understand complex processes within an organization.

By transforming text and numbers into visual diagrams, it provides a clear and immediate understanding of workflows, identifying bottlenecks, inefficiencies, or opportunities for improvement.

Whether you’re planning a new project or refining existing operations, using visuals can simplify the task at hand and make communication more effective across teams.

Visualizations come in various forms, each serving specific functions in the process analysis . Flowcharts, swimlane diagrams, and Gantt charts are just a few examples of how intricate processes can be represented visually, making them accessible and actionable.

These diagrams can serve multiple use cases, such as streamlining operations, enhancing team collaboration, or facilitating decision-making processes. With the right visualization techniques, your ability to oversee and improve processes is significantly enhanced.

What is Process Visualization?

When you’re looking to streamline your workflow or improve your operations, process visualization can be a powerful tool. It turns complex data and activities into visual formats that are easier to understand and manage.

Definition of Process Visualization

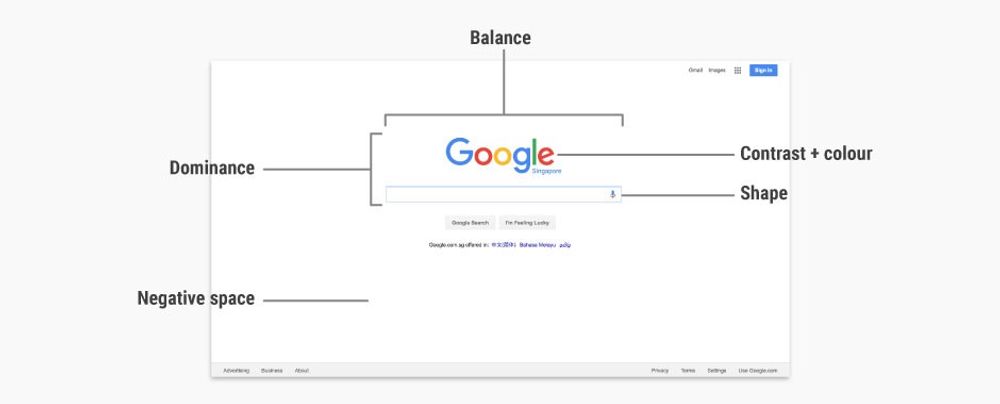

Process visualization is the graphical representation of a process or system’s workflow. It’s a technique that transforms your activities, systems, data, and operations into visual elements like diagrams and charts.

By doing so, it allows you to grasp complex processes quickly and identify areas in need of improvement.

Process maps are visual representations of the stuff your company does. A process map can represent software development, product manufacturing, IT infrastructure, employee onboarding, and more. Source: Lucidchart

Importance of Visualization in Processes

Visualization is key to improving communication and decision-making within your business.

A clear visualization aids in bridging language barriers, simplifies training, and ensures compliance with standards.

It also helps stakeholders to align on the workflow and objectives, fostering a better understanding of the full customer journey and internal processes.

Types of Process Visualizations

Various types of process visualizations are utilized depending on the specific needs and aspects of your operations:

- Flowcharts: A basic form of visualization that outlines the sequence of steps in a process.

- Process Maps: Offer a more detailed view, often including roles, decision points, and interactions.

- Customer Journey Maps: Visualize the experience and actions of a customer interacting with your company.

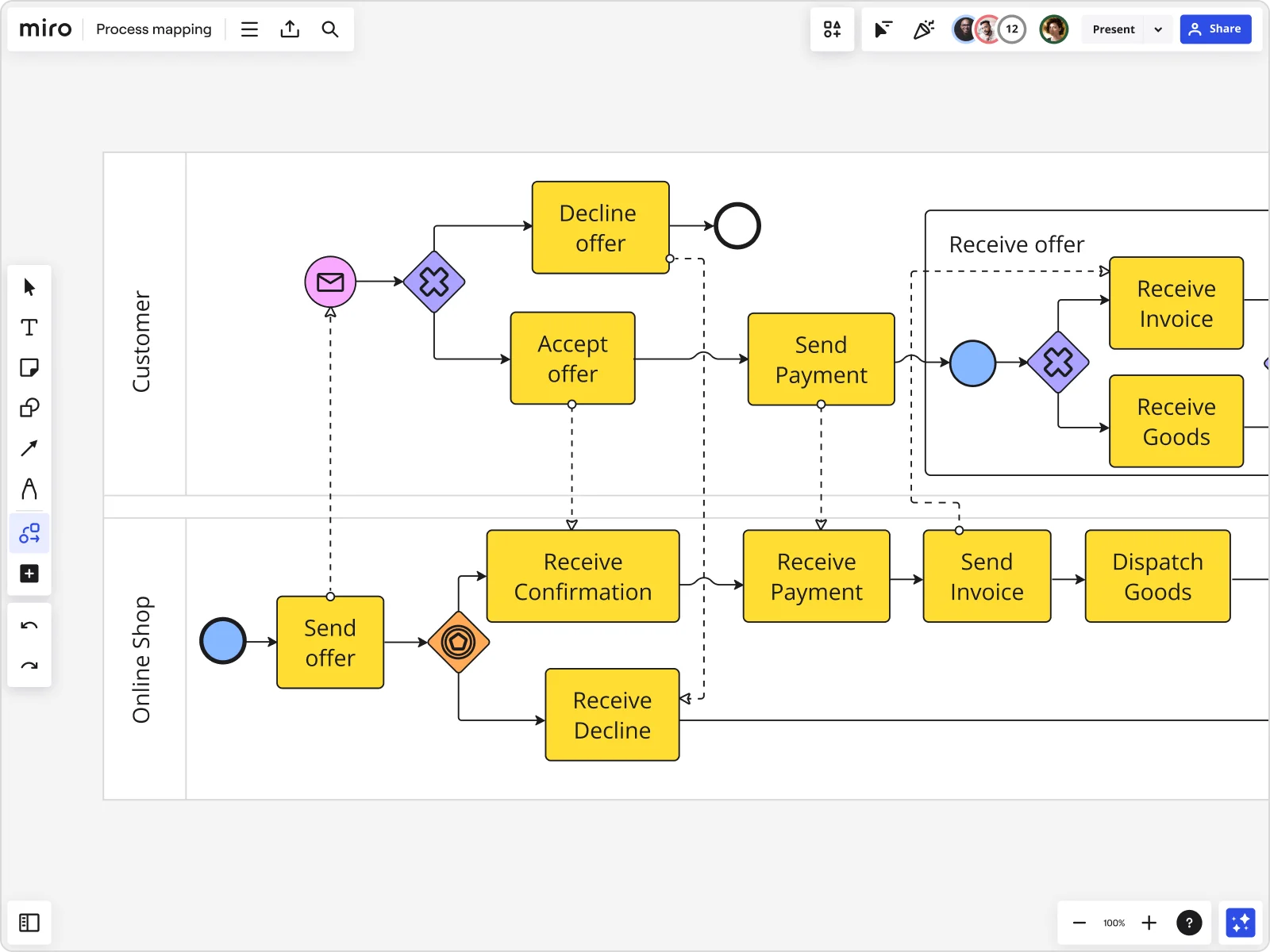

- BPMN Diagrams : Business Process Model and Notation diagrams that provide a standardized method for modeling.

- UML Diagrams: Universal Modeling Language diagrams used primarily in software development.

- Swimlane Diagrams: Diagrams that assign each process step to the person or team responsible.

- Gantt Charts: Useful in project management to display the timing of tasks.

- SIPOC Diagrams: Show Suppliers, Inputs, Process, Outputs, and Customers for a process.

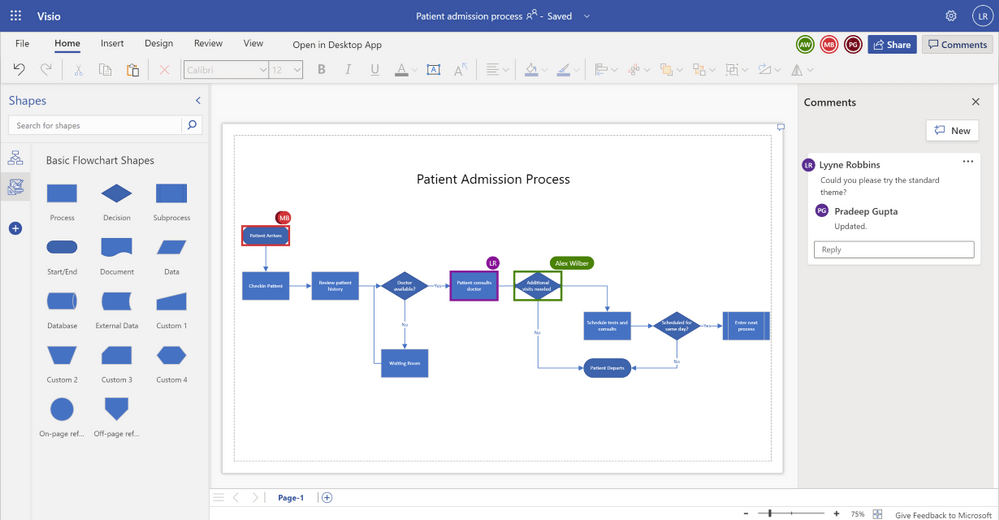

Example of a flowchart in Microsoft Visio

Comparing Diagrams and Charts

Choosing the right visualization often involves a comparison of different diagram types. For instance:

- Flowcharts vs. Process Maps : While both display the workflow, process maps provide deeper insights into the details and interactions between different steps.

- Gantt Charts vs. Swimlane Diagrams : Gantt charts excel in showing project timelines, while swimlane diagrams are better for clarifying responsibilities.

Deciding which technique to use depends on the aspect of the process you’re focusing on—be it timing, responsibilities, decision points, or the flow of data.

Use Cases and Applications of Process Visualizations

When you explore the potential of process visualization, you’ll find its applications span across several fields, enabling clearer analysis and collaboration amongst teams.

From streamlining complex workflows to enhancing communication between stakeholders, let’s dive into some of the specific areas where these techniques shine.

1. Business Process Management (BPM)

In Business Process Management (BPM) , you can utilize tools like BPMN to map out your business process models. This aids in identifying inefficiencies and streamlining operations. Process mining techniques can extract information from event logs to give you insights into process performance.

Business Process Management (BPM) is all about optimizing the efficiency and effectiveness of the operational workflows within an organization.

- Streamlining Workflows : Visualizing business processes helps identify redundancies and bottlenecks, enabling organizations to streamline operations for better efficiency.

- Compliance and Standardization : BPM often requires adherence to certain standards and regulations. Process visualization ensures that all steps comply with the necessary guidelines and that best practices are followed consistently.

- Performance Monitoring : By creating a visual representation of workflows, managers can monitor process performance in real-time and make adjustments as needed to improve outcomes.

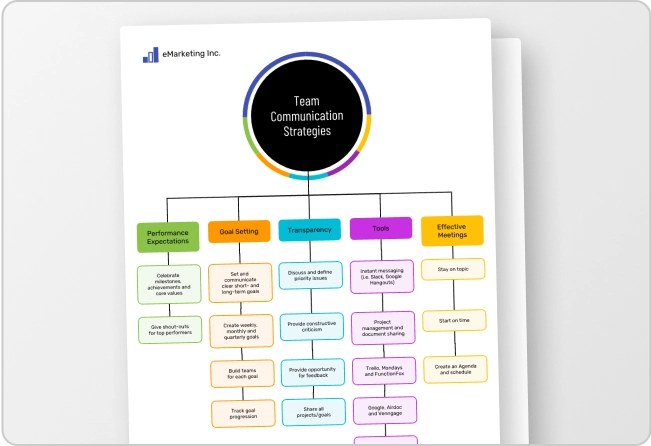

- Enhancing Collaboration : Process visualization in BPM fosters a shared understanding among team members, enhancing collaboration and ensuring that everyone is aligned with the process goals.

2. Human Resources (HR) Processes

Human Resources (HR) Processes benefit greatly from process visualization. Workflow diagrams help HR teams manage and optimize recruitment, onboarding, and employee development processes, ensuring that team members collaborate effectively.

In Human Resources (HR), process visualization can play a critical role in managing the various functions of the department.

- Recruitment and Onboarding : Visualizing the recruitment process helps HR to manage the various stages of hiring efficiently, from initial candidate screening to onboarding new employees.

- Employee Development : By mapping out career progression and development plans, HR can better guide employees on their growth paths within the organization.

- Performance Management : Visualizing performance review processes ensures fair and consistent evaluations, helping to identify areas for employee improvement and recognition.

- Policy Implementation : HR policies can be complex, and visualizing these processes helps ensure that they are implemented correctly and understood by all stakeholders.

3. Customer Experience and Journey Mapping

For Customer Experience and Journey Mapping , diagrams like customer journey maps turn abstract interactions into tangible visuals, allowing you to trace the customer’s pathway and improve their overall experience with data-driven decisions .

Customer experience is at the heart of any successful business, and journey mapping is a powerful way to visualize the entire customer experience process.

- Identifying Pain Points : By visualizing the customer journey, businesses can identify where customers may encounter issues or frustration, allowing for targeted improvements.

- Improving Conversion Rates : Understanding the paths that lead to conversions can help businesses refine their marketing strategies and sales funnels to improve overall conversion rates.

- Personalization : Mapping the customer journey allows for a more personalized experience, as businesses can tailor their interactions based on the specific needs and behaviors at different journey stages.

- Enhancing Customer Satisfaction : A visual map of the customer journey helps ensure that all touchpoints are designed to provide a positive experience, leading to increased customer satisfaction and loyalty.

4. Software Development and IT

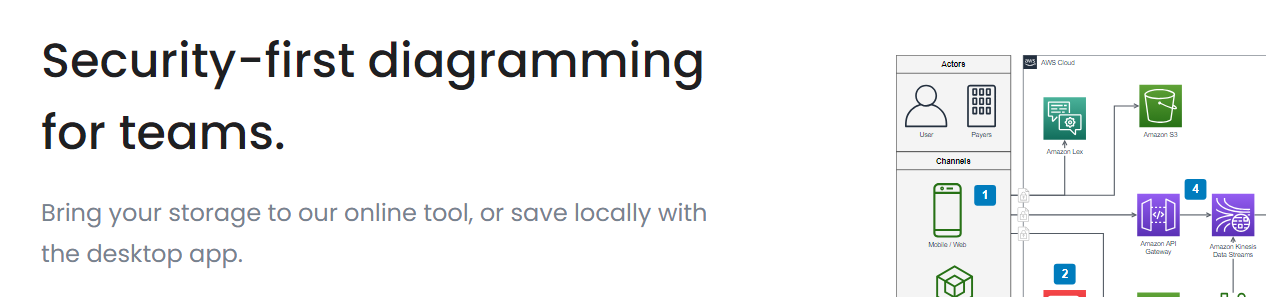

In Software Development and IT , detailed UML diagrams and data flow diagrams clarify complex software architectures and processes. These visuals aid development teams in understanding requirements and dependencies, thus streamlining software creation.

In the world of software development and IT, process visualization is key to managing complex projects and ensuring efficient, high-quality outcomes.

- Agile Workflow Visualization : Visualizing the stages of Agile methodologies, like Scrum or Kanban, helps teams stay on track with development cycles and manage tasks more effectively.

- Bug Tracking : A visual representation of bug reports and their statuses can help prioritize issues and streamline the debugging process.

- System Architecture Mapping : Visual diagrams of system architectures can help IT professionals understand the interactions between different components and manage system complexity.

- Change Management : When IT systems need to undergo changes, visualizing the process can help manage the transition smoothly, ensuring minimal disruption to services.

5. Operations and Production

For Operations and Production , particularly in sectors like automotive, process visualization is essential. It helps in the meticulous process planning and design necessary to maintain efficiency and quality in high-volume environments.

In the realm of operations and production, process visualization is crucial for managing complex systems and ensuring product quality and efficiency.

- Workflow Optimization : By mapping out production workflows, businesses can identify inefficiencies and optimize for speed and waste reduction.

- Quality Control : Visualizing quality checkpoints and feedback loops helps maintain high standards and reduces the likelihood of defects.

- Inventory Management : A visual representation of inventory levels and supply chain processes can help prevent overstocking or stockouts, leading to better inventory control.

- Safety Procedures : Clearly outlined safety procedures through visual aids can improve adherence to safety protocols and reduce workplace accidents.

6. Sales and Marketing Strategies

Sales and Marketing Strategies leverage process visuals to track customer journeys and marketing funnel progress. These visuals foster better understanding and coordination of marketing efforts and sales approaches.

For sales and marketing, process visualization offers a way to strategize, track, and optimize efforts for maximum impact.

- Lead Generation and Nurturing : Visualizing the lead generation and nurturing process helps sales and marketing teams understand the customer lifecycle and tailor their approach accordingly.

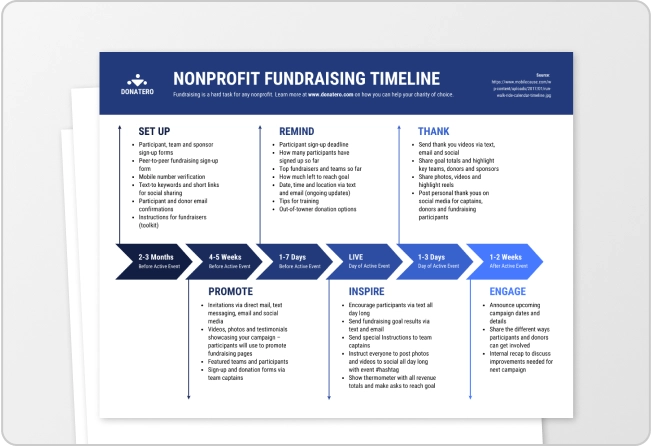

- Campaign Management : Mapping out marketing campaigns provides a clear overview of all activities and timelines, ensuring that each component is executed properly and on schedule.

- Performance Tracking : Visual dashboards displaying sales and marketing metrics allow teams to monitor performance in real-time and adjust strategies as needed.

- Market Analysis : Using visual tools to analyze market trends and consumer behavior can guide more informed decision-making and strategic planning.

7. Knowledge Management and Workshops

Lastly, in Knowledge Management and Workshops , the use of visuals aids in the generation and dissemination of ideas. Teams use collaborative diagrams to capture knowledge, share insights, and drive innovation in interactive settings.

Process visualization can be a powerful ally in this domain.

- Information Retention : Visual aids can help individuals better retain and recall information by presenting it in a more engaging and digestible format.

- Complex Concept Simplification : By breaking down complex ideas into visual diagrams or flowcharts, organizations can facilitate easier understanding among employees.

- Encouraging Knowledge Sharing : Interactive visual platforms can encourage employees to contribute their expertise, fostering a collaborative knowledge-sharing culture.

- Training and Development : Visual tools can enhance training programs by illustrating procedures and best practices, making learning more effective for new and existing employees.

Workshops are designed to be collaborative and interactive, and process visualization can significantly enhance their effectiveness.

- Idea Generation : Visual brainstorming tools can help participants better articulate and develop their ideas during workshops.

- Group Collaboration : Visualizing thoughts and workflows can help align group members, ensuring everyone is on the same page and working towards a common goal.

- Actionable Outcomes : By visually mapping out the steps needed to achieve workshop goals, participants can leave with a clear action plan.

- Feedback Visualization : Gathering and displaying feedback visually can help workshop facilitators and participants identify common themes and areas for improvement.

By integrating these visualization techniques into your operations across these various domains, you’ll facilitate better communication, enhance understanding, and streamline your workflows.

Techniques and Methodologies to Visualize Processes

In your endeavors to enhance workflow efficiency, you’ll come across various techniques and methodologies for process visualization. They are the backbone of how you analyze, understand, and improve your processes. Let’s dive into some specific strategies that you can use.

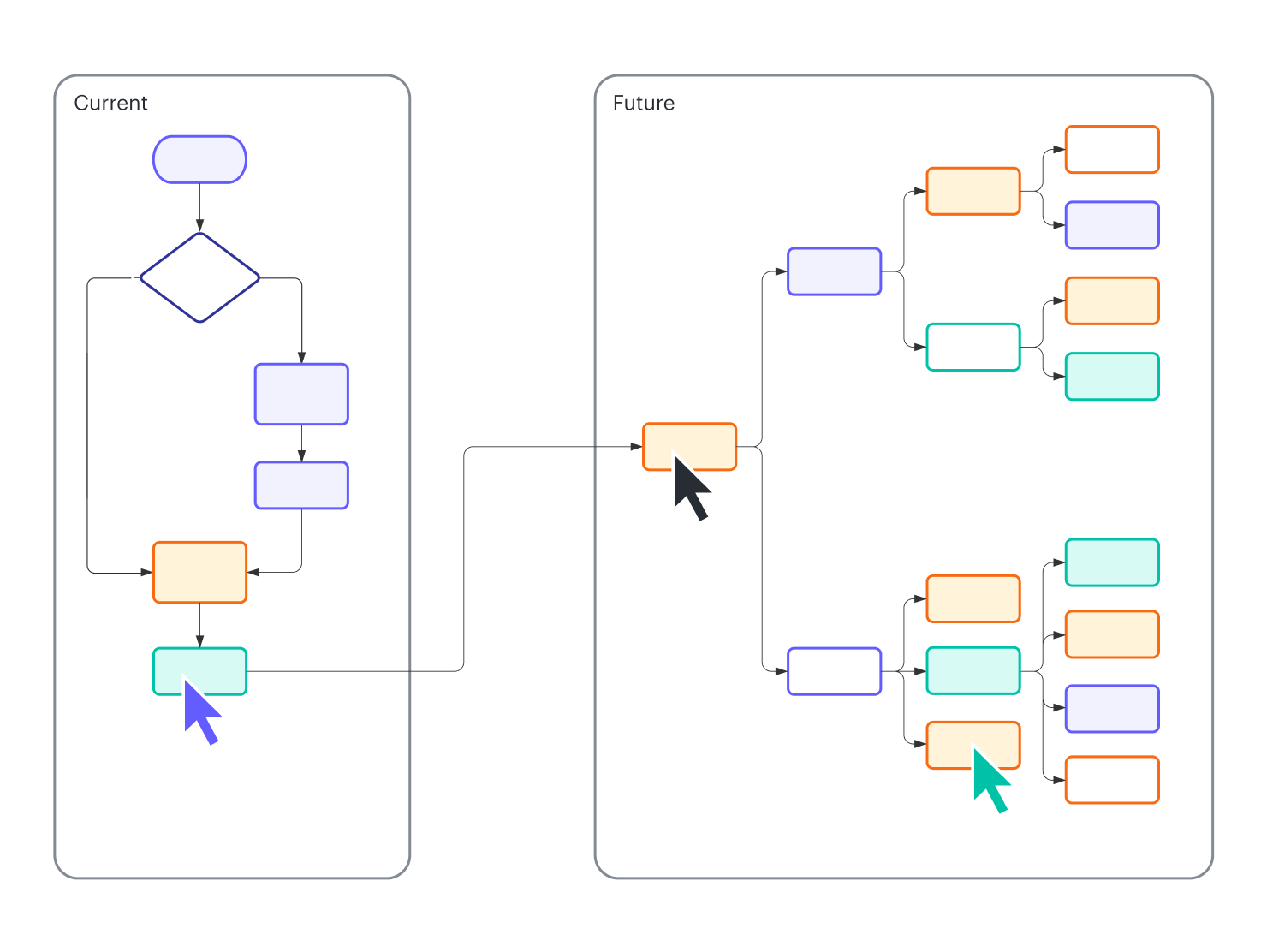

Process Mapping Techniques

Creating a process map is the first step in visualizing your work processes. This visual representation is done using different techniques which include:

- Linear Process Maps: These illustrate the sequence of steps as they occur in a process.

- Cross-Functional Process Maps: These highlight the interactions between different teams or systems .

- Value Stream Mapping (VSM): VSM identifies inefficiencies and visualizes the flow from start to finish focusing on value creation.

Each technique serves the purpose of identifying critical elements such as bottlenecks and areas for process improvement .

Analysis and Improvement Methods

After process mapping , the next steps are:

- Process Analysis: It involves a thorough review of the process flows to spot inefficiencies and areas to standardize.

- Continuous Process Improvement: You will iteratively refine your processes to enhance efficiency and productivity.

Your team ‘s collaborative efforts in analysis and implementing improvements are vital for successful outcomes.

Documentation and Standardization

Documentation is key for:

- Process Standardization: Ensuring everyone adheres to the best practice

- Record Keeping: Maintaining a clear and updated description of processes

Proper process documentation ensures consistency and provides a foundation for training and knowledge transfer within your team.

Risk Assessment and Compliance

Employ process risk assessment techniques to:

- Evaluate potential risks within your process flows

- Ensure compliance with legal and industry standards

This step is crucial in protecting your organization from unforeseen threats and maintaining operational integrity.

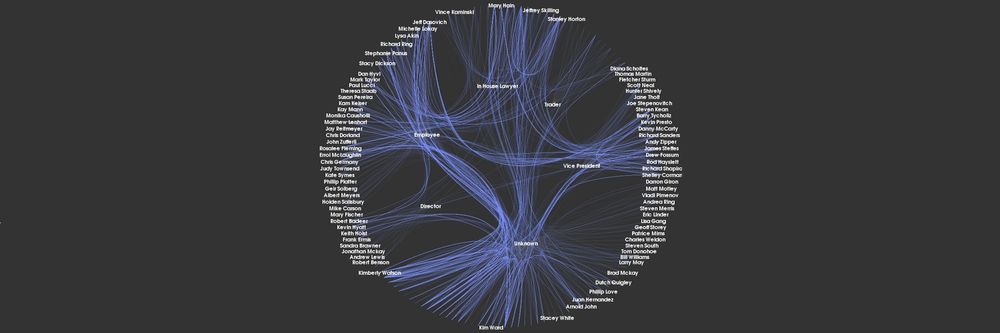

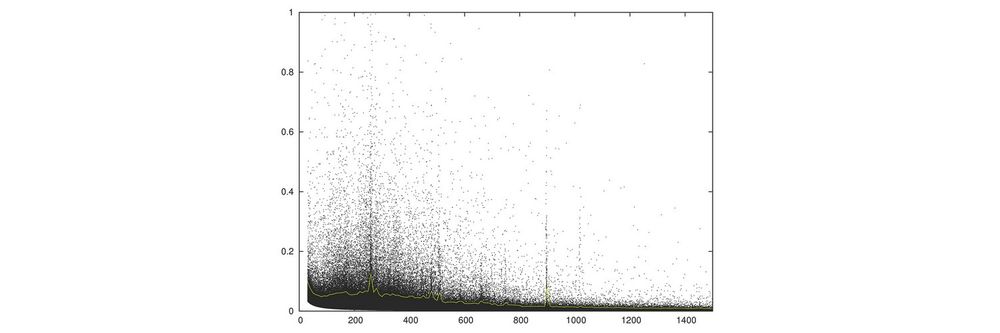

Process Mining

Process mining is a bridge between data mining and business process management. It’s a method that uses specialized algorithms to analyze event logs recorded by an organization’s IT systems.

These logs contain the digital footprints of various business processes, capturing events like the start and end of a task, the user performing it, and the timestamp of each action.

Here’s what process mining involves and how it can be beneficial:

- Discover : Process mining software reconstructs the actual processes in real time by piecing together the sequence of events from start to finish. This can help you discover the most common pathways through a process and identify unexpected behavior.

- Enhance : By visualizing the flow of processes, you can pinpoint bottlenecks, redundancies, and deviations from the intended process flow. This information is invaluable for enhancing efficiency and compliance with standards or regulations.

- Optimize : With the insights gained from process mining, organizations can optimize their processes by eliminating inefficiencies and standardizing best practices across the board.

Image source: Celonis Process Mining

Visual Tools and Software

When you’re looking to visualize your business processes effectively, selecting the right tools is crucial. These software solutions can help you create flowcharts, facilitate team collaboration, and automate your process designs for improved productivity.

Diagramming and Mapping Tools

Lucidchart is a popular choice for creating diagrams and process maps . It allows you to visualize sequences and relationships within your business processes accurately.

You can draft detailed flowcharts to outline procedures, making complex data and workflows easier to comprehend and communicate.

Example of a flowchart in Lucidcharts

Collaboration and Productivity Platforms

For visual collaboration and productivity, platforms such as Miro and ClickUp offer versatile spaces to work together with your team.

These tools enable real-time collaboration on visual elements, helping to align team members on process planning and design.

Simulation and Modeling Software

Simulation and modeling software take your process visualization a step further. They not only depict the steps but also allow you to run hypothetical scenarios and predict outcomes.

This hands-on approach to process visualization aids in optimizing and refining your workflows before implementation.

Automation and Process Design Tools

Integrating automation into your process design can save you time and reduce errors. Tools like Zapier Canvas allow for automated process visualization , turning repetitive tasks into automated sequences.

This can streamline how your processes are visualized, designed, and executed, elevating your business’s efficiency.

Case Studies and Real-World Examples of Process Visualization

In the dynamic world of business, leveraging process visualization has proven pivotal in enhancing efficiency and compliance across various industries. Each sector, from healthcare to financial services, faces unique challenges and employs process visualization to meet these head-on.

Healthcare Process Optimization

In healthcare, you can see a transformation when process visualization aids in patient flow optimization . Imagine reducing wait times and improving patient care by identifying bottlenecks within emergency departments.

By visualizing patient pathways, healthcare providers can pinpoint problem areas fast and implement targeted process improvements to ensure a smoother customer journey through the care cycle.

Retail Workflow Streamlining

Retailers have harnessed process visualization to streamline workflows and enhance the customer shopping experience.

By mapping out the entire retail operations, from inventory management to point-of-sale interactions, you can identify delays that frustrate customers and act swiftly to rectify them. The result? A more efficient retail workflow that directly translates to happier customers and potentially higher sales.

Manufacturing Efficiency Enhancements

In manufacturing, the pressure to maintain operational efficiency while minimizing costs is constant. Through process visualization, uncovering inefficiencies in the production line becomes significantly easier.

You’re enabled to take swift action, applying automation where necessary to enhance the process flow and maintain a competitive edge with continuous manufacturing efficiency .

Financial Process Compliance

In the financial sector, adhering to compliance regimens is not just good practice—it’s the law. By visualizing processes, financial institutions can oversee and audit complex compliance procedures , ensuring no step is missed.

Better yet, this level of clarity supports improved decision-making, leading to more robust operations that can adapt to legislative changes more effectively.

Visualize and Gain Insights From Processes: The Essentials

Process visualization is more than just a set of tools—it’s a transformative approach that can illuminate the inner workings of various aspects of your business.

From enhancing customer experiences to streamlining software development, from optimizing operations to strategizing sales and marketing efforts, and from enriching knowledge management to facilitating productive workshops, the applications are as diverse as they are impactful.

Key Takeaways: Use Cases For Process Vizualizations

Key takeaways of process visualization use cases include:

- Enhanced Clarity : Visualizing processes makes complex data and workflows easier to understand at a glance, leading to better communication and decision-making.

- Improved Efficiency : By identifying bottlenecks and redundancies, process visualization helps streamline workflows, saving time and resources.

- Data-Driven Decisions : Visual tools provide a factual basis for analysis, ensuring that decisions are informed by data rather than intuition.

- Operational Excellence : In operations and production, visualization can lead to improved safety, quality control, and inventory management.

- Knowledge Sharing : Process visualization in knowledge management promotes a culture of shared understanding and continuous learning.

Remember, the power of process visualization lies in its ability to turn abstract data into tangible insights, fostering an environment where continuous improvement is not just a goal but a reality.

FAQ: Visualize Processes Use Cases

How does process visualization improve decision-making in business.

Process visualization aids decision-making by providing a clear representation of work processes, which helps in identifying inefficiencies and making informed improvements. It’s a strategic tool that facilitates a comprehensive understanding of workflows, resources, and potential bottlenecks.

What are the key benefits of using process visualization in project management?

Using process visualization in project management enhances transparency, improves communication among team members, and allows for more effective coordination. It helps you better manage timelines and resource allocation, leading to more successful project outcomes.

What tools are commonly used for visualizing complex processes?

There are various tools for visualizing complex processes, such as flowcharts, Gantt charts, and specialized software like BPM (Business Process Management) platforms. These tools streamline the creation and analysis of detailed process diagrams.

How can process mapping techniques improve operational efficiency?

Process mapping techniques provide a framework for analyzing and optimizing each step within a business process. By clearly outlining tasks and sequences, they make inefficiencies more apparent, thus paving the way for streamlining and improving overall operational efficiency.

In what ways can outcome visualization affect project outcomes?

Outcome visualization sets clear expectations by depicting the desired end state or results of a project. This visual approach aligns all stakeholders on objectives and can motivate and guide teams towards achieving set goals, positively impacting project outcomes.

Can you highlight best practices for effectively mapping business processes?

When mapping business processes, it’s best to start with a clear objective, involve cross-functional teams, use standardized symbols and notations, and regularly update the maps as processes evolve. This ensures that the maps remain accurate tools for analysis and communication.

Meet Eric, the data "guru" behind Datarundown. When he's not crunching numbers, you can find him running marathons, playing video games, and trying to win the Fantasy Premier League using his predictions model (not going so well).

Eric passionate about helping businesses make sense of their data and turning it into actionable insights. Follow along on Datarundown for all the latest insights and analysis from the data world.

Related Posts

Exploring The Benefits of Process Mining Tools

Process Mining as a Service (PROMaaS): Your Complete Manual

9 Hands-On Business Use Cases For Process Mining

- Product overview

- All features

- Latest feature release

- App integrations

CAPABILITIES

- project icon Project management

- Project views

- Custom fields

- Status updates

- goal icon Goals and reporting

- Reporting dashboards

- workflow icon Workflows and automation

- portfolio icon Resource management

- Capacity planning

- Time tracking

- my-task icon Admin and security

- Admin console

- asana-intelligence icon Asana AI

- list icon Personal

- premium icon Starter

- briefcase icon Advanced

- Goal management

- Organizational planning

- Campaign management

- Creative production

- Content calendars

- Marketing strategic planning

- Resource planning

- Project intake

- Product launches

- Employee onboarding

- View all uses arrow-right icon

- Project plans

- Team goals & objectives

- Team continuity

- Meeting agenda

- View all templates arrow-right icon

- Work management resources Discover best practices, watch webinars, get insights

- Customer stories See how the world's best organizations drive work innovation with Asana

- Help Center Get lots of tips, tricks, and advice to get the most from Asana

- Asana Academy Sign up for interactive courses and webinars to learn Asana

- Developers Learn more about building apps on the Asana platform

- Community programs Connect with and learn from Asana customers around the world

- Events Find out about upcoming events near you

- Partners Learn more about our partner programs

- Support Need help? Contact the Asana support team

- Asana for nonprofits Get more information on our nonprofit discount program, and apply.

Featured Reads

- Project management |

- Workflow diagram: Symbols, uses, and ex ...

Workflow diagram: Symbols, uses, and examples

A workflow diagram provides a visual overview of a business process or system. These diagrams help team members easily visualize their goals and deadlines, preventing potential bottlenecks. Find out how to create one of your own.

A workflow diagram can help prevent project deviations and bottlenecks by communicating goals and deadlines in a visual way. Whether you use a workflow diagram when onboarding new hires or to streamline use cases and testimonials, it’s a great way to visualize tasks and data flows.

From what it is to how to create one of your own, we’ve put together some of the most important facts to know about workflow diagrams and included helpful examples.

What is a workflow diagram?

A workflow diagram—also known as a workflow chart—provides a graphic overview of a business process or system. Usually, you’ll use these diagrams to visualize complex projects after you’ve completed the initial research and project planning stages.Once you’ve created a workflow diagram, you will have a detailed view of high level tasks and dependencies based on the overall project timeline and objectives.

Workflow diagram compared to other process mapping

Workflow diagrams share many aspects of other diagrams in the UML (Unified Modeling Language)—a standard language for specifying, visualizing, constructing, and documenting the artifacts of software systems. But workflow diagrams differ slightly from other process mapping and UML diagrams. Here, we compare them to some common types to show how:

Business process mapping

Workflow diagrams are closely related to business process mapping . The difference between the two is that a process map typically outlines steps in detail while a workflow diagram gives a visual representation of them. The objective of a workflow diagram is to help team members understand their tasks, objectives, and roles and responsibilities within the project at a high level.

Process flowcharts

Workflows and flowcharts are often confused. While the two terms sound similar, a workflow is just one type of flowchart . You can also use flowcharts to visualize other processes, like PERT charts and process documentation .

Activity diagrams

Activity diagrams are another type of flowchart that outline the flow of a series of activities within a system. It’s used to translate a business system’s functions into more digestible information for those who don’t understand the backend workings as much. In other words, an activity diagram is an easy way to visualize technical processes. For example, in Asana , you could draw an activity diagram to create a project as follows:

User clicks the button to create a project

New project launched

User customizes the project with different names and features

User saves the project and updates when needed

Data flow diagrams

Data flow diagrams follow the data through an operating system or process, whereas workflow diagrams follow the work itself. Instead of inputting actions, for example, in a data flow diagram you’d enter in metrics, results, or other data points that you want to portray.

When to use a workflow diagram

A workflow diagram is a visual representation of a process, either a new process you’re creating or an existing process you’re altering. For example:

A process to streamline your ecommerce customer journey.

A project to increase customer retention and satisfaction.

A process to automate and optimize manual tasks involving customer data.

A workflow diagram comes between the business process map (which you’ll create before the project starts) and business process automation (which you’ll use to optimize and streamline processes). This is because your map provides detailed process steps that stakeholders need to begin work, while a workflow diagram is a high level visual representation that can help clarify overarching goals during the process.

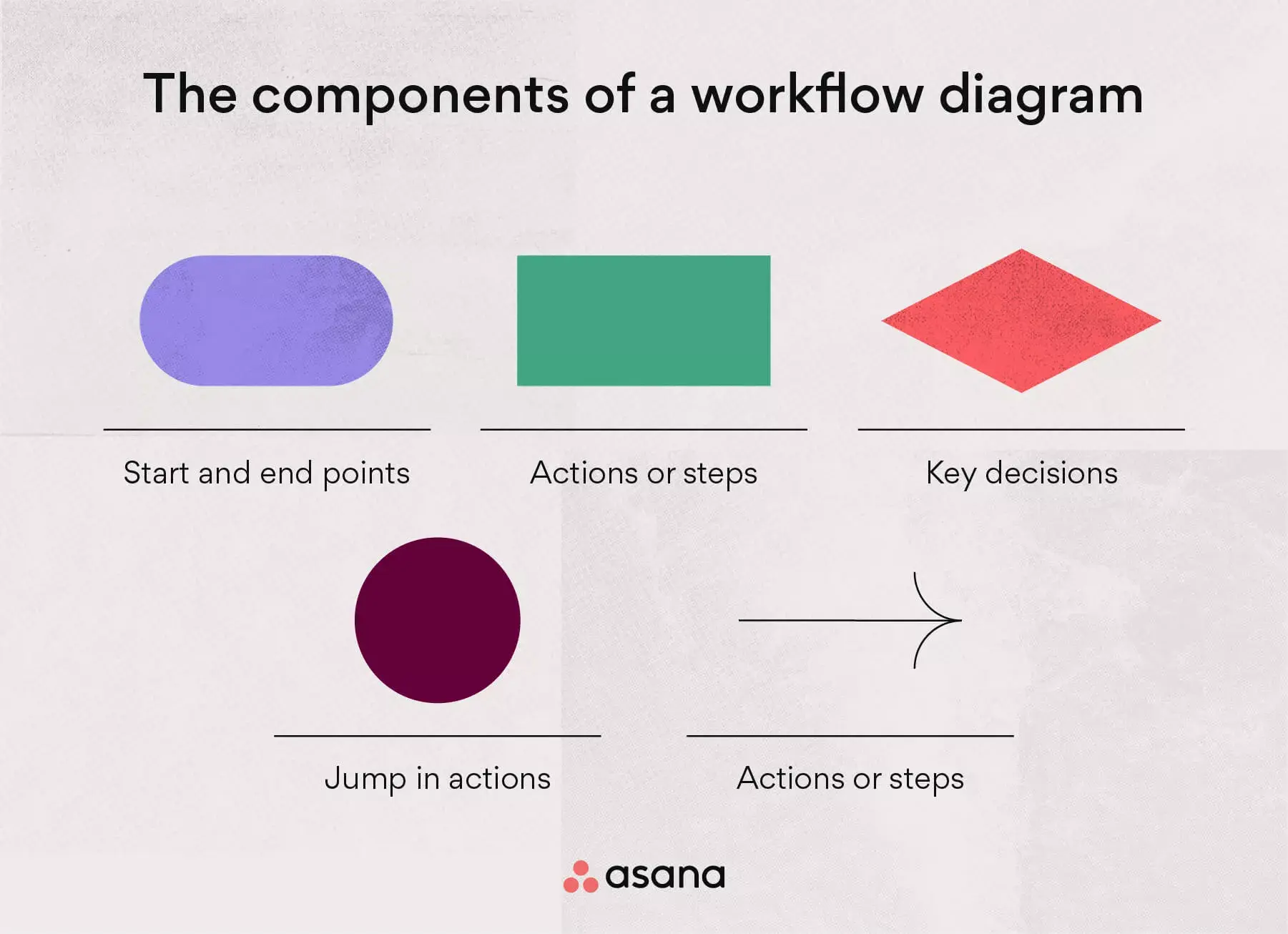

The components of a workflow diagram

In order to understand how a workflow diagram works, you first need to understand the components that make up a workflow. These include inputs, outputs, and transformations, which all help to communicate deliverables in as little time as possible.

Once you understand these components, you’ll be able to properly read a workflow process diagram and create one of your own. The main components of a workflow diagram include:

Inputs: An action that impacts the following step

Transformations: An input change

Outputs: The outcome after the transformation

These components are visualized by shapes and arrows, including:

Ovals: Represent the start and end points of a process.

Rectangles: Represent instructions about actions and steps.

Diamonds: Represent key decisions during the process build.

Circles: Represent a jump in actions and may indicate steps to bypass (in certain situations).

Arrows: Connectors that represent the dependency between all shapes and actions.

Together, these components instruct the reader how to follow the correct path and achieve the desired outcome.

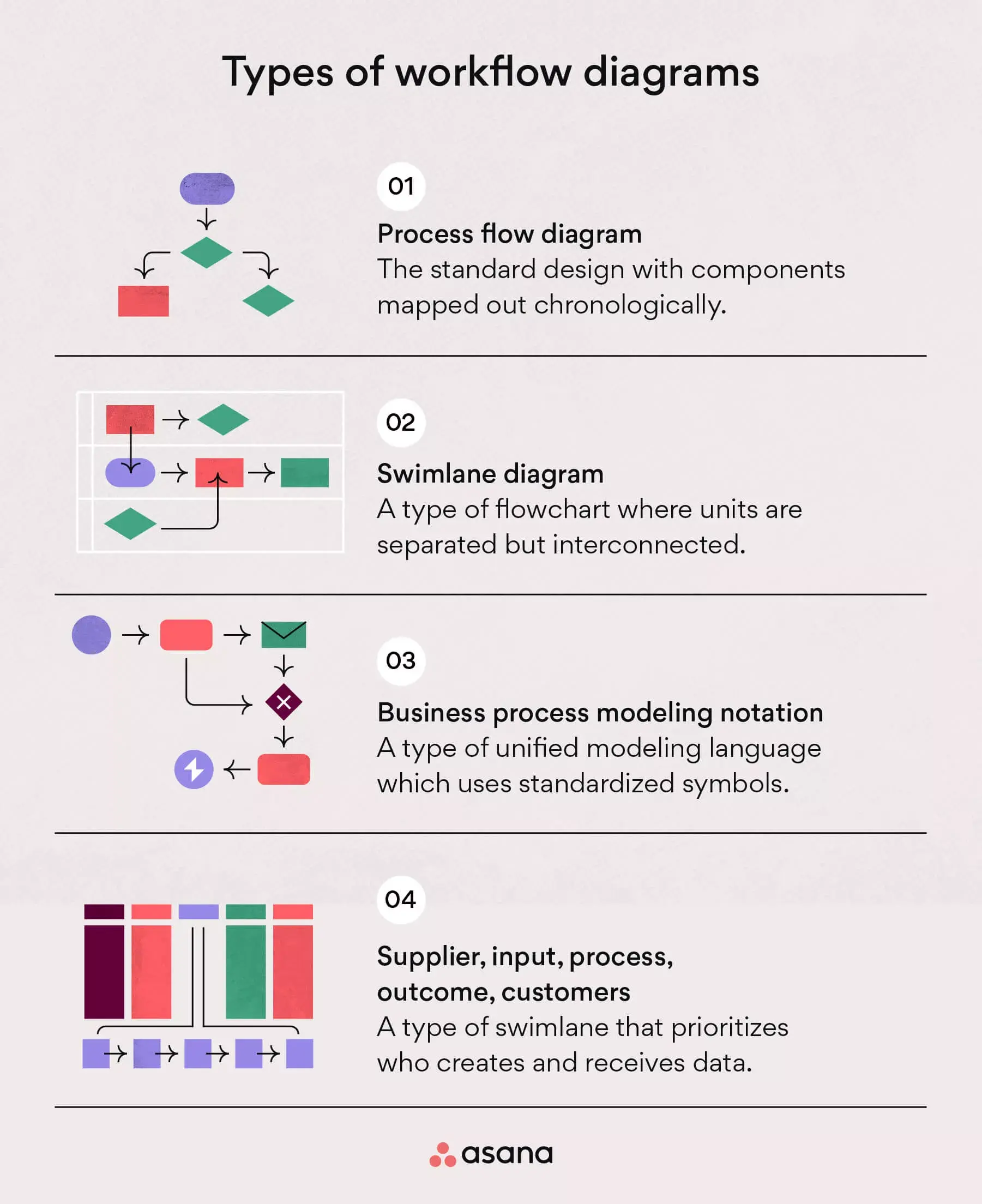

Types of workflow diagrams

When it comes to visualizing processes, there are a few different workflow diagram formats that you can choose from. Each one offers unique advantages that can help you map out your next process. The type of diagram you choose will depend on the process you’re working on and your needs for that process.

From process flows to swimlanes, here are the four different types of workflow diagrams you can use for your workflow analysis.

1. Process flow diagram

A process flow diagram tool is the standard design for workflows. In this diagram, all components are mapped out chronologically, making it a basic visual representation of a process. This type of diagram provides a general overview of individual tasks and objectives without getting into too much detail.

Best for: Teams that want a high level visual representation of a new process that is quickly comprehended by any stakeholder or department.

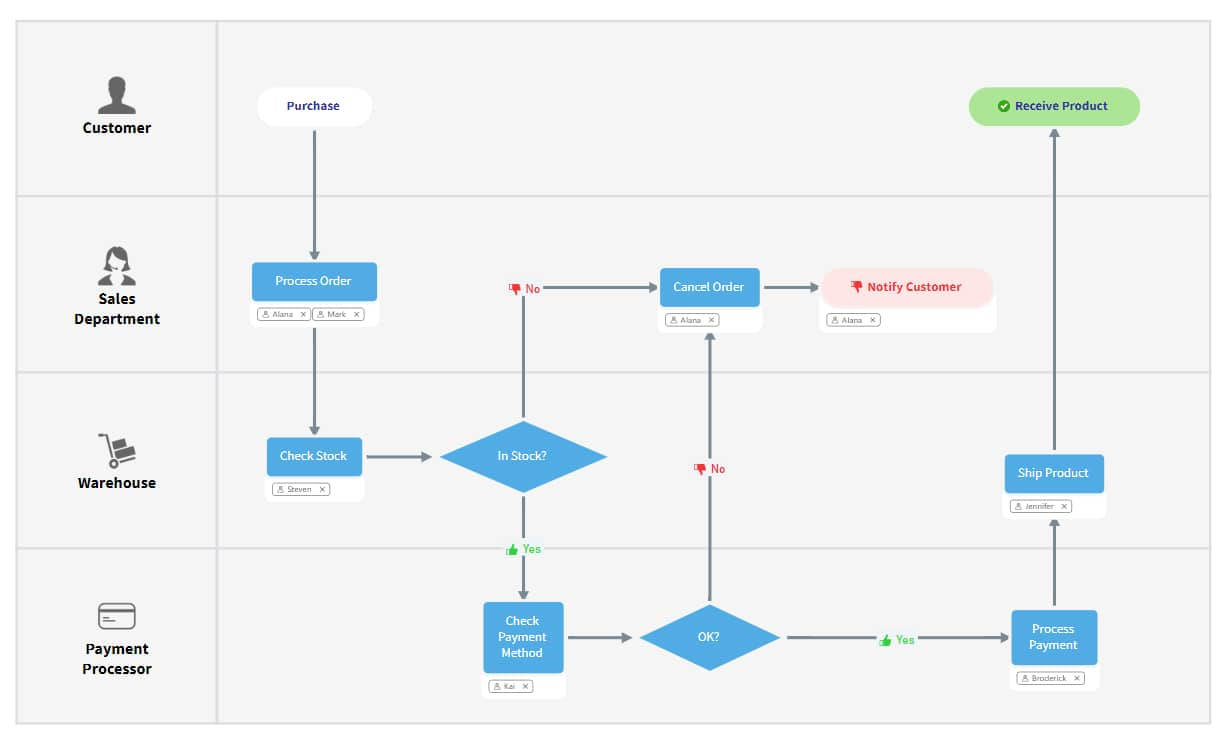

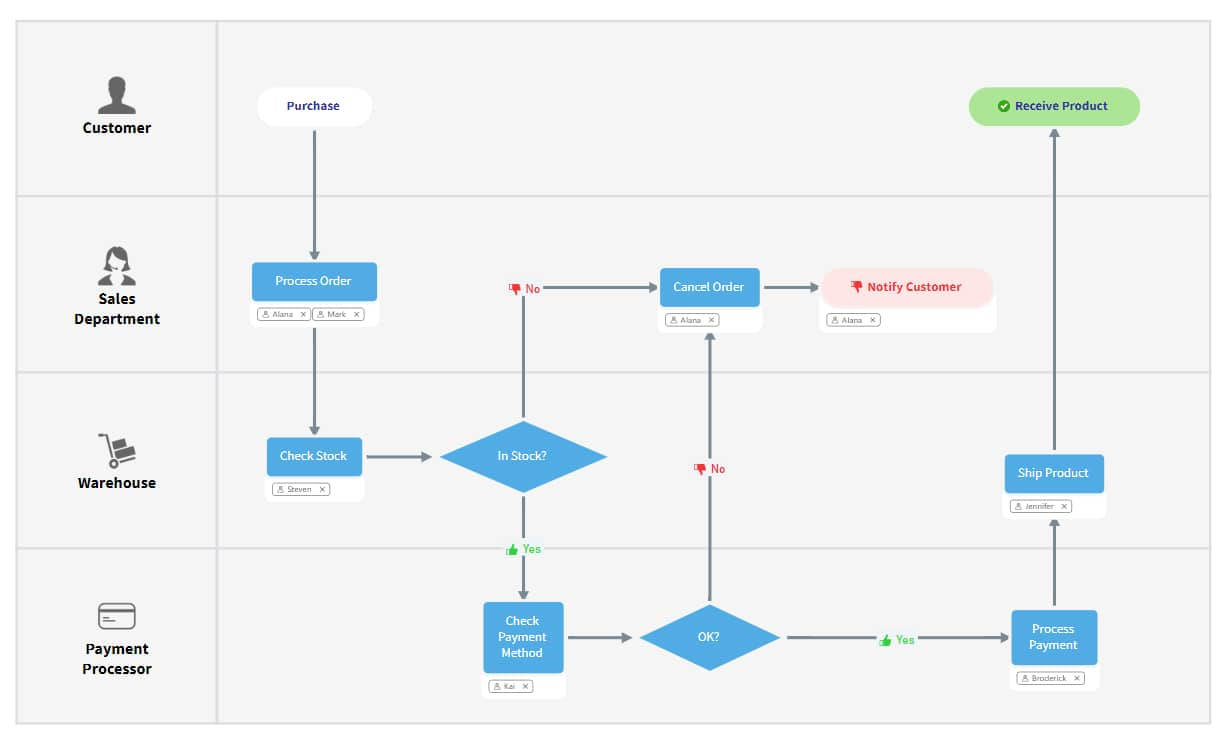

2. Swimlane diagram

A swimlane diagram is also a popular workflow layout, though swimlanes differ quite significantly from process flow diagrams. A swimlane diagram breaks down your workflow into smaller flows or units. These flows are interconnected but separated to highlight interactions and possible inefficiencies. This creates visibility and offers a deeper dive into the overall process workflow.

Best for: Teams working on complex processes with many layers that are interrelated but independent.

3. Business process modeling notation (BPMN) diagram

BPMN uses uniform notations that both business and technical stakeholders can easily interpret. It is a type of unified modeling language which uses standardized symbols to communicate different steps.

BPMN diagrams focus on the information that is received internally and how that information is interpreted. This is why it's most commonly used for internal process changes that don't impact external customers.

Best for: Teams working on process improvements in different departments.

4. Supplier, input, process, output, customers (SIPOC) diagram

SIPOC is a type of swimlane diagram that focuses on analyzing multiple different parts of a workflow.

Unlike a traditional diagram that organizes data in sequential order, a SIPOC diagram prioritizes who creates and receives the process data. SIPOC focuses on how the data is being received internally as well as externally which is why it's used for processes associated with customer experience.

Best for: Teams looking to focus on how data is being received internally and externally.

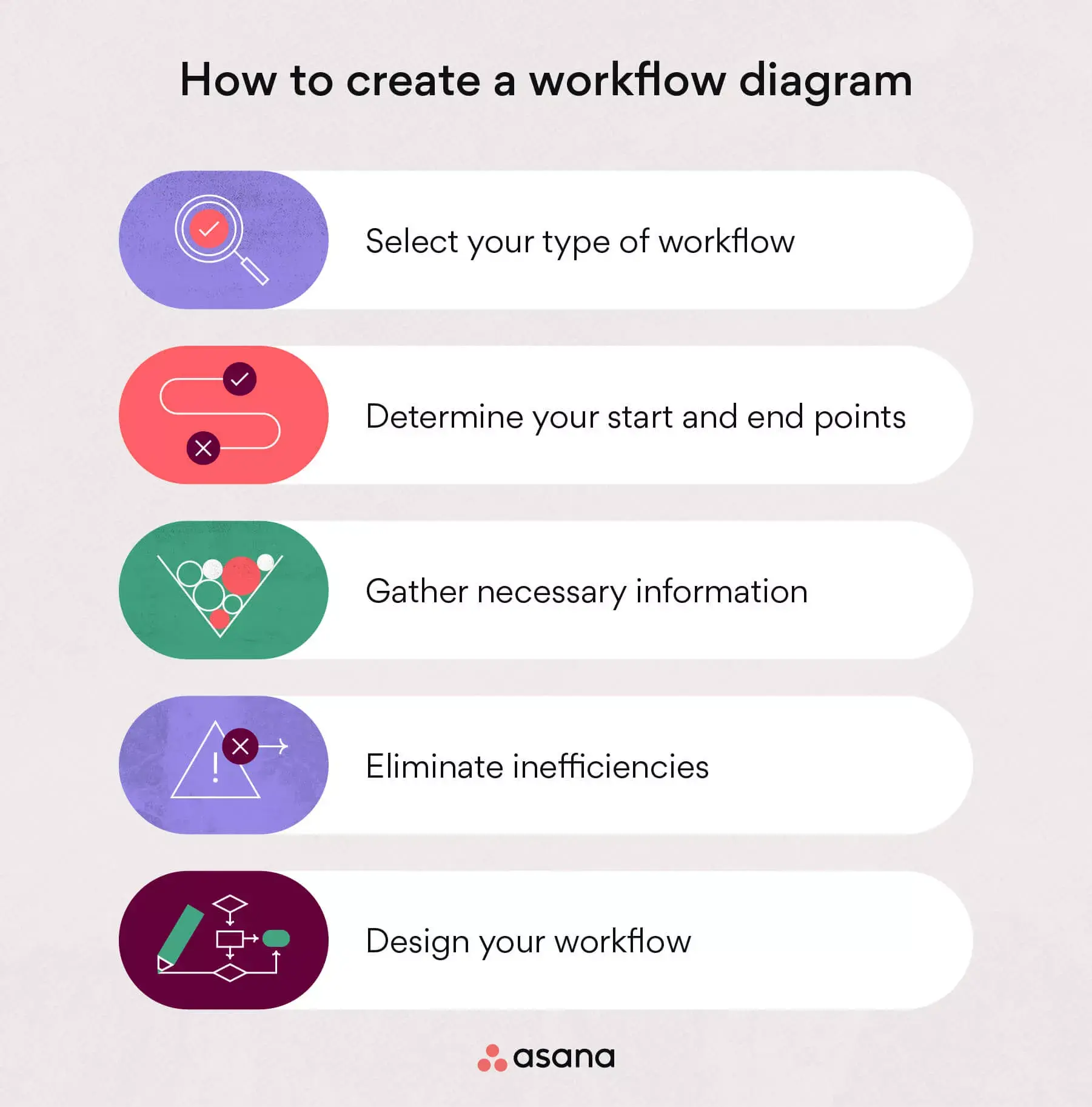

How to create a workflow diagram (with example)

To create a workflow diagram, begin putting together the main components of your process. To do this, bring together your inputs, outputs, transformations, and your main process deliverables.

Map workflow components out on your diagram by using arrows, circles, rectangles, ovals, and diamonds to represent each data point.

1. Select your type of workflow

To select the workflow type that’s best for you, consider the functions needed for your process. Is it a complex process with multiple stakeholders best fit for a swimlane diagram? Or is it a relatively simple process that’s best suited for a simplistic process flow diagram?

While you can adjust your workflow as you go, it's easier to decide on the type of workflow up front. This way, you know exactly how complex or simple your workflow is.

2. Determine your start and end points

Next, determine your workflow start and end points (represented by ovals on your diagram).

To determine these points, consider when your process begins and when it ends. Is there an action that triggers the process? Likewise, is there an action or step that ends the process? These data points will help effectively communicate when the process begins and ends.

3. Gather necessary information

To gather information, connect with stakeholders to understand each piece of the process. This may include a kickoff meeting with various departments and leaders to gather the details and approvals needed to begin constructing your workflow diagram.

Since each process differs, the information you need to gather will also vary. Consider the steps required to complete the process, the stakeholders who will be involved, and any other significant details that will help inform readers.

4. Eliminate inefficiencies

The final step before constructing your visual workflow is to consider and eliminate any inefficiencies that may arise. Make sure you analyze inefficiencies before designing your workflow so you can prevent any issues—rather than dealing with them in real time. The specific inefficiencies will vary, but they can include a lack of resources, issues with product development, or any other obstacle that could arise during the process.

Document these inefficiencies in a change log under your change control process . This way you will be able to communicate these problems to stakeholders, prioritize inefficiencies, and track whether they've been resolved.

5. Design your workflow

Finally, begin constructing your workflow. Gather the unit information, data points, and efficiencies and map them on the diagram you chose in step one. Since each process is different and each diagram is constructed differently, yours will likely be unique in its design. Here’s just one example of what a workflow diagram might look like:

Once your workflow is designed, review it with your stakeholders to ensure it's accurate and appropriate for the situation. This is a great way to ensure all inefficiencies have been accounted for and resources have been specified properly.

Use workflows to map out processes

Visualizing workflows can help you effectively communicate deliverables to stakeholders and leadership. Plus, it’s a great way to align multiple different departments on a given process.

To take your workflows one step further, try workflow management software. From task automation to streamlined communication, Asana can help.

Related resources

Provider onboarding software: Simplify your hiring process

8 steps to write an effective project status report

Inventory management software: How to control your stock

Timesheet templates: How to track team progress

We use essential cookies to make Venngage work. By clicking “Accept All Cookies”, you agree to the storing of cookies on your device to enhance site navigation, analyze site usage, and assist in our marketing efforts.

Manage Cookies

Cookies and similar technologies collect certain information about how you’re using our website. Some of them are essential, and without them you wouldn’t be able to use Venngage. But others are optional, and you get to choose whether we use them or not.

Strictly Necessary Cookies

These cookies are always on, as they’re essential for making Venngage work, and making it safe. Without these cookies, services you’ve asked for can’t be provided.

Show cookie providers

- Google Login

Functionality Cookies

These cookies help us provide enhanced functionality and personalisation, and remember your settings. They may be set by us or by third party providers.

Performance Cookies

These cookies help us analyze how many people are using Venngage, where they come from and how they're using it. If you opt out of these cookies, we can’t get feedback to make Venngage better for you and all our users.

- Google Analytics

Targeting Cookies

These cookies are set by our advertising partners to track your activity and show you relevant Venngage ads on other sites as you browse the internet.

- Google Tag Manager

- Infographics

- Daily Infographics

- Popular Templates

- Accessibility

- Graphic Design

- Graphs and Charts

- Data Visualization

- Human Resources

- Beginner Guides

Blog Graphs and Charts Process Mapping: Everything You Need to Know [+ Templates]

Process Mapping: Everything You Need to Know [+ Templates]

Written by: Jennifer Gaskin Feb 23, 2022

Process mapping is a business and management tool organizations can use to create a visual depiction of internal or external business processes. Process maps can be used to train workers, identify bottlenecks in processes and improve communications.

While process mapping may seem complicated, it’s really not. With Venngage’s Flowchart Maker , it’s easy to visualize and organize your business processes into professional, easy-to-follow process flow maps. Let’s learn more about process mapping and explore some process flowchart templates to get you started.

Click to jump ahead

What is process mapping?

What are some examples of process mapping, what are the benefits of process mapping.

- What are the types of process maps?

How can I create process maps for a business?

Process mapping faqs.

Process mapping or business process modeling is a technique used in many businesses and organizations to create a visual representation of a workflow or process.

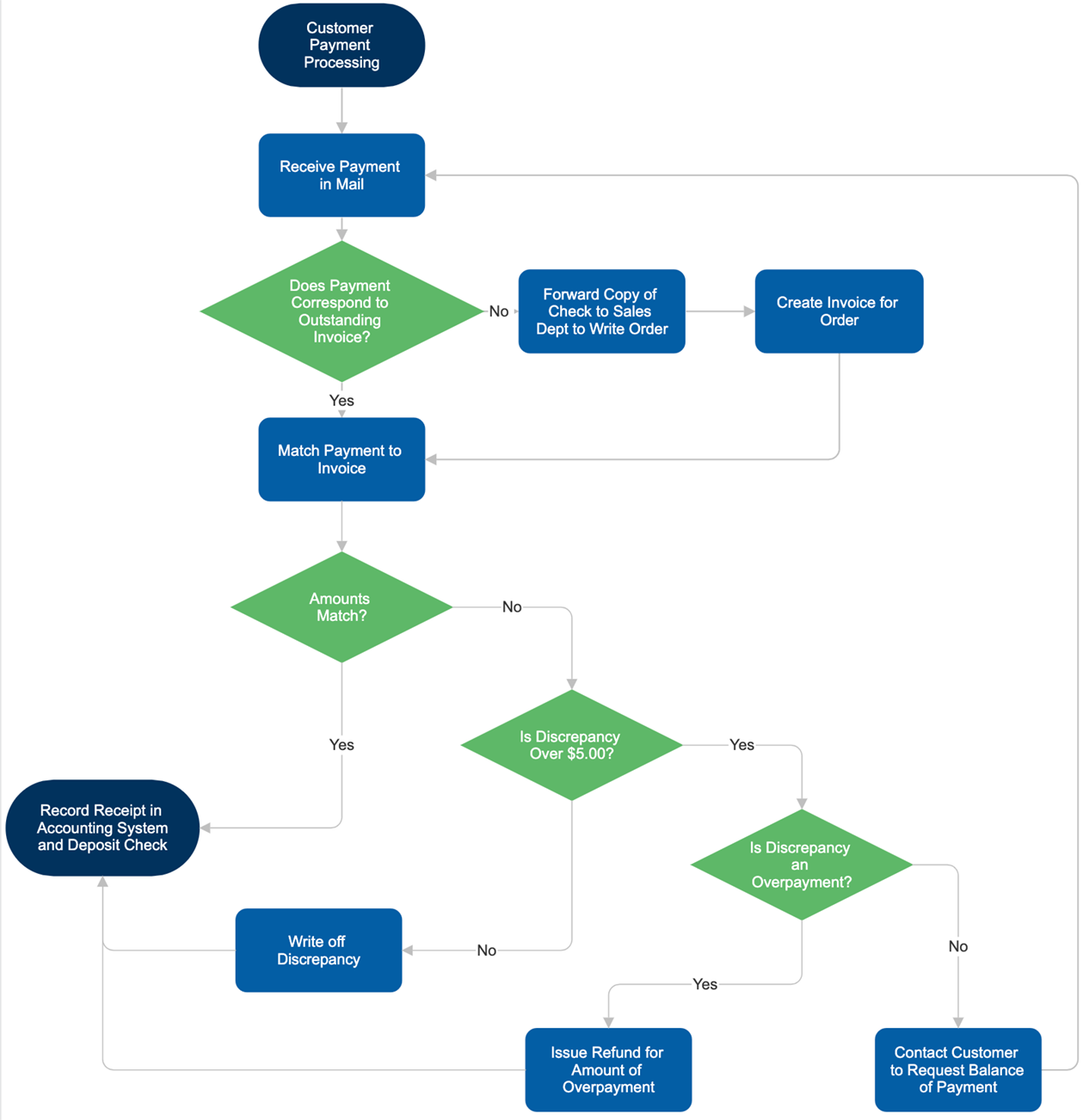

This business process map, for example, describes the employee involuntary termination process:

Related : How to Diagram a Business Process [Process Diagramming Templates]

Process mapping can apply to individual workers, departments or the business as a whole. Because it has such varied applications, its purpose can also vary from simply helping people understand how tasks get done to the complex work of overhauling processes that aren’t as efficient as they should be.

For example, this process map visualizes the entire process of troubleshooting slow servers. The arrows and lines give IT team members a clear set of steps to follow to make sure their customers’ systems are up and running.

Instead of providing team members with specific tasks required to get their jobs done, this process map was built to visualize the proposal process. So, instead of detailed descriptions of actions an individual worker must take, it describes actions that may or may not be taken by entire teams or departments.

In addition to visualizing the steps in a process, many organizations use process mapping to better understand how different departments might interact. In this example, each box is color-coded to a department; the effect is that it’s immediately apparent which team is responsible for which tasks.

Another example is this swimlane process map that describes the customer service process as well as showing the interaction between different teams:

Some process maps will flow in a progressive manner, meaning one task is done and then another and so on. But many businesses have operations that require many individual tasks to be done simultaneously, sometimes by separate departments, though not always. As this business process map shows, it’s still possible to create an effective process flowchart when tasks must be done at the same time.

Let’s take a look at some more process map examples to help illustrate the diversity of applications this management tool has.

This simple flowchart illustrates one of the biggest benefits of process mapping for businesses, which is training and onboarding. This process flowchart shows in detail every step involved in taking the order of a restaurant customer.

Of course, process maps can get much more complicated than taking a diner’s order, as this process flowchart shows. In this case, a disciplinary process is illustrated and explained, but it could apply to any process in which a series of decisions must be made.

Interested in using process maps to make your organization better? Check out our flowchart examples designed to improve processes.

As we’ve explored, one of the major uses for process maps is to ensure consistency and quality of work. This particular example applies to a customer service help desk , but your company could use a template like this to ensure any process is standardized.

Flowcharts and process maps aren’t the only diagrams that could be useful for your business. Learn how to use some of the most popular ones.

Process maps aren’t just good for decision-making in which multiple options are possible. They can also help organizations develop new tools and procedures, as this flowchart illustrates.

The bigger the task, the more complex it’s likely to be. That’s why process mapping often covers a vast array of tasks and departments that all flow into one another until the process is complete. As this process map shows, it’s often common for tasks to be done simultaneously across the business.

Business process mapping has a host of benefits, the most common ones include:

- Insight into current processes and workflows

- Potential to identify bottlenecks and inefficiencies

- Employee training and onboarding

- Process improvement, including quality and consistency (learn more about process documentation )

- Improving communication inside and among departments

- Identifying opportunities for process automation

- Showing compliance with applicable laws and regulations (e.g. visualize HR compliance checklists )

What are types of process maps?

Depending on what you want to communicate, you can choose different types of process maps to visualize your business processes. The most common ones include:

Basic flowchart

The simplest form of a process map is a basic flowchart covering the inputs and outputs or the steps of a process, such as this example about the customer support process:

For more examples of flowcharts, check out:

- 21+ Flowchart Examples to Organize Projects and Improve Processes

- 20+ Flow Chart Templates, Design Tips and Examples

Swimlane (Cross-functional) map

A swimlane map (also called swimlane flowchart, swimlane diagram or cross-functional diagram) visualizes a process or a workflow that’s spanning across different departments.

Phases of the workflow are usually read from left to right or top to bottom, and distinct teams are displayed in rows or columns.

Here’s an example of a swimlane flowchart describing the labor contract process and how it stretches across different departments of a company:

Detailed process map

As its name suggests, a detailed process map provides a closer look at each step of the process.

This process flowchart shows a more complex customer service process, but the use of the map goes a long way toward simplifying it. It’s easy to see at a glance how information or tasks flow and which areas of the business are responsible for each one.

High-level process map

On the other hand, a high-level process map is a high-level visual representation of a process and often looks at the relationship or interactions between SIPOC (Supplier, Input, Process, Output, Customer).

This flowchart examines in more detail what SIPOC refers to:

Value stream map

A value stream map is a lean-management tool that visualizes the process of bringing a product to its customer. It often has a unique set of symbols to illustrate the flow of information:

Workflow diagram/ Workflow map

A workflow diagram or a workflow map visualizes a process in a “flow” format:

Creating a process map for your business may seem like an intimidating prospect. But by following a few simple steps, you can create a tool for visualizing and understanding how your organization works.

Step 1: Identify the process to be mapped; in some cases, the process will cross over multiple departments. If so, it’s wise to note this before you begin actually creating the map in a later step.

Step 2: Break the process down into individual steps. How detailed you need to get depends on the process at hand.

Step 3: Use a template or create your process map from scratch using Venngage. Our smart process flowchart diagrams make it simple and easy to reorder steps, add or remove steps and apply colors and fonts to enhance understanding.

Looking for more examples of flowcharts you can use for your business? Here are 20-plus examples of flowcharts to get you started.

Do you have more questions about process mapping, how it works and how it can be used in your business? I’ve got answers.

What is process mapping in operations management?

Operations management is one of the areas in business that can benefit the most from process mapping. That’s because one of this department’s most common tasks is improving how a business functions, which can be done with the visual help of a process map.

What is process mapping in Six Sigma?

There are many schools of thought in the business world that make use of process mapping; one of the most popular is Six Sigma, a process improvement method that is highly regarded in manufacturing and management. Six Sigma process maps can be quite simple, as this Lean Six Sigma (a type of Six Sigma program) process flowchart illustrates.

How is process mapping used in business?

Process mapping has many applications in business, including training and onboarding, process improvement, communication and team building and much more.

Gain valuable insights with an engaging and informative process map

Organizations big and small can benefit from the use of process maps to help them visualize what they’re doing right (and wrong), get new team members up to speed and boost internal communication, among other benefits. With Venngage’s Process Flowcharts , just a few clicks can have you on your way.

Discover popular designs

Infographic maker

Brochure maker

White paper online

Newsletter creator

Flyer maker

Timeline maker

Letterhead maker

Mind map maker

Ebook maker

Get work done right, and right-on-time with our industry leading BPM platform.

19 Best Process Mapping Tools to Visually Manage Work

In the dynamic landscape of modern businesses, optimizing workflows is crucial. Process mapping tools serve as the guiding compass, enabling organizations to visualize, streamline, and enhance their operational efficiencies.

From intricate software platforms to intuitive diagramming solutions, these tools empower teams to chart out complex processes, fostering clarity, collaboration, and continual improvement.

In this article, we will delve into the top 19 process mapping tools, exploring their functionalities and impact on driving organizational success.

We will cover:

- The list of tools

What is process mapping?

Why are process mapping tools important, features to look for in process mapping tools, process street.

Process Street offers a seamless platform for process mapping, aiding in visualizing workflows and procedures. Its intuitive interface allows step-by-step documentation, defining roles, and incorporating rich media. With its templates and collaborative features, teams can streamline operations, track progress, and improve efficiency across various industries and tasks.

Key features:

- Checklist automation: Create and automate dynamic checklists for recurring processes.

- Conditional logic : Customize workflows with conditional logic based on responses.

- Collaborative workflows: Facilitate team collaboration on tasks and processes.

- Integration capabilities: Seamlessly integrate with tons of apps and tools.

- Task assignment: Assign and track responsibilities within processes.

- Data collection: Gather and store data through forms within checklists.

- Reporting and analytics: Generate insights with analytics on process performance.

- Version control: Maintain and track versions of checklists for auditing.

- API access: Connect with other applications through APIs.

- Document storage: Attach and manage documents relevant to processes.

- Intuitive interface makes it user-friendly.

- Offers secure data handling and storage.

- It has a mobile application.

- Custom automated workflows can be made with AI .

- It has lots of premade workflow templates to choose from.

- There is no free plan.

Pricing: Process Street pricing page .

Canva simplifies process mapping by providing customizable templates, shapes, and design elements. Users can create visual process maps with ease, utilizing its drag-and-drop interface to illustrate workflows, add annotations, and integrate branding. Its collaborative features facilitate team input, making it versatile for mapping diverse procedures and workflows.

- Image library: Extensive collection of stock photos, icons, and illustrations.

- Brand kit: Stores brand assets for consistent branding.

- Social media integration: Direct sharing to social media platforms.

- Animations: Allows the creation of animated graphics and presentations.

- Print and digital designs: Supports designs for both online and print media.

- Offers various design templates for different purposes.

- Allows easy editing of elements, colors, and fonts.

- Supports sharing and teamwork on design projects.

- Provides basic features at no cost.

- Some templates lack customization options.

- There are limitations in the number of brand assets in the free version.

Pricing: Canva pricing page .

Nifty streamlines process mapping through its project management interface, enabling task breakdowns and timelines for visualizing workflows. Its collaborative boards, task dependencies, and customizable workflows empower teams to map processes, assign tasks, and track progress seamlessly. With integrations and a user-friendly layout, it aids in efficient process management.

- Task management: Create, assign, and organize tasks.

- Project milestones: Set and track project milestones.

- Collaborative workspaces: Shared spaces for team collaboration.

- Gantt charts: Visualize project timelines and dependencies.

- Team chat: Real-time communication within the platform.

- Offers a free version.

- Monitors time spent on tasks and projects.

- Syncs tasks and milestones with calendars.

- Offers pre-built templates for various project types.

- There are limited integration capabilities.

- It takes time to get team members used to it.

Pricing: Nifty pricing page .

Lucidchart is a versatile cloud-based platform known for its intuitive interface, enabling seamless creation of diagrams and visual representations. Renowned for collaborative capabilities, it facilitates team brainstorming and process mapping. Its adaptability across industries makes it a go-to choice for illustrating complex concepts and workflows.

- Shape libraries: Extensive libraries for symbols and shapes.

- Diagram creation: Tools for creating flowcharts, mind maps, org charts, etc.

- Presentation mode: Ability to present diagrams.

- Collaborative editing: Simultaneous editing by multiple users.

- Revision history: Track changes made to diagrams.

- Ability to link shapes and objects.

- Embed diagrams in websites or documents.

- Capability to work on diagrams offline.

- Ability to customize colors, fonts, and styles.

- There is an object limit in the free version.

Pricing: Lucidchart pricing page .

Miro , a collaborative online whiteboarding platform, is often used for product management. It offers visual tools for brainstorming, creating product roadmaps, and organizing user story maps. Miro enhances product development by enabling cross-functional teams to collaborate, ideate, and plan, fostering better communication and innovation in the product management process.

- Flowchart creation: Create and customize flowcharts.

- Innovation management: Keep track of ideas and documents.

- Product roadmap tools: Design project roadmaps with whiteboards.

- Project management: Use flow charts to design project management processes.

- It has a robust set of product features.

- Excellent for idea sharing.

- Supports video conferencing.

- It’s difficult to use with a trackpad.

Pricing: Miro pricing page .

MindMeister

MindMeister serves as an effective process mapping tool by offering a visual platform for brainstorming and organizing ideas. Its mind mapping capabilities enable users to outline workflows, connect processes, and illustrate relationships. Collaborative features empower teams to collectively design, refine, and visualize intricate processes for enhanced clarity and efficiency.

- Mind mapping: Creation of visual mind maps for brainstorming.

- Collaboration: Real-time collaboration for teams.

- Templates: Pre-built templates for various mind map types.

- Customizable styles: Options to customize map styles, colors, etc.

- Export/import: Capability to import/export mind maps in various formats.

- Offers the ability to present mind maps.

- Converts map items into actionable tasks.

- Adds notes and attachments to map items.

- Embeds maps in websites or documents.

- It’s not possible to increase the size of images.

Pricing: MindMeister pricing page .

Pipefy excels as a process mapping tool by offering customizable workflows within a visual interface. It enables mapping complex processes, automating tasks, and managing workflows efficiently. With its drag-and-drop system, forms, and automation, teams can visually map, optimize, and streamline their processes for improved productivity and clarity.

- Customizable workflows: Tailor processes to specific needs.

- Visual process mapping: Drag-and-drop interface for mapping workflows.

- Automation: Automate tasks and workflows.

- Forms and fields: Create customizable forms and fields.

- Task management: Track and manage tasks within processes.

- Offers pre-built templates for various workflows.

- Monitors and manages task deadlines.

- Allows users to attach and manage files within processes.

- The data analysis is poor.

- It’s not possible to create workflow approval processes.

Pricing: Pipefy pricing page .

ClickUp is a robust project management platform acclaimed for its versatility and customization. It streamlines workflows and fosters collaboration across teams. Known for its adaptability to various work styles, ClickUp offers a comprehensive solution for task management and team communication, enhancing productivity and project organization.

- Multiple views: Kanban boards, lists, calendars, and Gantt charts for varied project perspectives.

- Time tracking: Monitor time spent on tasks and projects.

- Team collaboration: Comments, mentions, and real-time collaboration on tasks.

- Goals and OKRs: Set and track objectives and key results.

- Document management: File attachments, document editing, and version control.

- Offers a wide range of features catering to diverse project manager needs.

- Allows for the creation of automated workflows, enhancing efficiency.

- Offers a free plan ideal freelancers and small businesses .

- Users have reported occasional lags or performance issues, especially with large data sets.

Pricing: ClickUp pricing page .

GitMind serves as a versatile process mapping tool, aiding in the creation of clear visual diagrams for workflows, decision trees, and organizational processes. Its user-friendly interface and collaborative features empower teams to brainstorm, plan, and structure complex processes, fostering transparency and coherence in project management and strategy development.

- Diagram creation: Tools for creating various diagram types.

- Templates: Pre-built templates for different diagramming needs.

- Customizable styles: Options to customize diagram styles, colors, etc.

- Allows simultaneous editing by multiple users.

- Offers options to share and publish diagrams.

- There are a lot of limitations in the free version.

Pricing: GitMind pricing page .

Creately empowers seamless visual collaboration, offering a dynamic platform for creating diverse diagrams and models. Renowned for its intuitive interface, it fosters team brainstorming, aiding in process mapping, flowcharting, and wireframing. With real-time collaboration, it enhances clarity and efficiency in project planning and problem-solving, elevating teamwork experiences.

- Diagram creation: Tools for creating various types of diagrams.

- Real-time collaboration: Simultaneous editing and collaboration.

- Templates: Pre-built templates for different diagram types.

- Integration: Compatibility with various apps and platforms.

- Tracks changes and versions of diagrams.

- Very affordable for different budgets.

- Allows the ability to embed diagrams in websites or documents.

- They don’t offer many tutorials on using the software.

Pricing: Creately pricing page .

Edraw stands as a versatile diagramming tool, renowned for its user-friendly interface and extensive template library. It empowers users to create a wide array of diagrams and visuals, aiding in process mapping, flowcharting, and organizational charts. Its intuitive design fosters efficient communication and visualization of complex concepts, enhancing productivity.

- Diagram creation: Tools for various types of diagrams.

- Access controls: Permissions and access settings.

- Notes and attachments: Add notes and attachments to diagrams.

- Offline access: Capability to work on diagrams offline.

- Search and filter: Search and filter capabilities within diagrams.

- Offers pre-built templates for different diagram needs.

- Has compatibility across different operating systems.

- Provides a drag-and-drop interface.

- The paid plans are a bit pricey.

- There’s no ability to combine templates.

Pricing: Edraw pricing page .

Visual Paradigm

Visual Paradigm stands as an advanced modeling tool, renowned for its comprehensive suite of modeling capabilities. It empowers users to create intricate diagrams and models, aiding in software development, system design, and business process analysis. With its intuitive interface, it fosters efficient visualization and planning of complex systems and workflows.

- Unified modeling language (UML) support: Tools for various UML diagrams.

- Diagram creation: Create diverse diagrams for software and systems.

- Business process modeling: Tools for business process analysis and modeling.

- Team collaboration: Real-time collaboration for multiple users.

- Requirement management: Capture and manage project requirements.

- Has features for Agile methodologies.

- Generates code from models and vice versa.

- Has prototyping and wireframing capabilities.

- There is no free version.

- It is not suitable for freelancers.

Pricing: Visual Paradigm pricing page .

Google Drawings

Google Drawings offers a simple yet effective platform for process mapping, enabling users to create visual diagrams and flowcharts. Its intuitive interface and basic shapes empower individuals to outline workflows, visualize processes, and illustrate relationships. Collaborative features allow team input, making it accessible for various mapping needs.

- Basic shapes: Tools for creating various shapes.

- Text editing: Capability to add and edit text.

- Lines and connectors: Tools for drawing lines and connectors.

- Image insertion: Ability to add and manipulate images.

- Color and fill options: Customizable color and fill settings.

- Has tools for freehand drawing.

- Has the capability to work with multiple layers.

- Gives users the ability to add comments and annotations.

- There are options to group and ungroup elements.

- There are no project management options.

Pricing: It’s free!

Gliffy serves as a robust process mapping tool, offering a user-friendly platform to create visual diagrams and flowcharts. Its intuitive interface and extensive shape library enable the seamless mapping of workflows, facilitating team collaboration. With its collaborative features and templates, it aids in illustrating and optimizing complex processes efficiently.

- Templates: Pre-built templates for different diagram needs.

- Customizable styles: Options to modify diagram styles, colors, etc.

- Drag-and-drop interface: Intuitive tools for easy diagram creation.

- Real-time collaboration: Simultaneous editing by multiple users.

- Capability to import/export diagrams in various formats.

- Has compatibility with various apps and platforms.

- Offers permissions and access settings.

- Provides features for managing teams and users.

- Users have to pay for each integration.

Pricing: Gliffy pricing page .

Cacoo functions as a versatile process mapping tool, providing a collaborative platform for creating visual diagrams and flowcharts. Its intuitive interface and extensive shape library empower users to map workflows, collaborate in real time, and illustrate complex processes. It facilitates team coordination and clarity in process visualization and optimization.

- Diagram embedding: Embed diagrams in websites or documents.

- Allows users to access and edit diagrams on mobile devices.

- Offers the capability to work on diagrams offline.

- Has collaboration features for comments and feedback.

- Usage is limited in certain plans.

Pricing: Cacoo pricing page .

Visme stands as a versatile visual content creation platform, offering intuitive tools to craft engaging presentations, infographics, and diagrams. Its user-friendly interface and diverse templates empower users to convey complex information effectively. Renowned for its design flexibility, it aids in creating impactful visuals for diverse communication needs.

- Presentation creation: Tools for creating interactive presentations.

- Infographic design: Features for designing infographics.

- Data visualization: Tools for presenting data visually.

- Templates: Pre-built templates for various visual content.

- Customizable designs: Options to customize styles, colors, etc.

- Has a diverse library of icons and images.

- Offers the ability to create interactive elements.

- Has tools for creating charts and graphs.

- It’s difficult to create custom measurements.

Pricing: Visme pricing page .

Draw.io functions as an intuitive and versatile diagramming tool, offering a robust platform for creating diverse diagrams and flowcharts. Renowned for its user-friendly interface and compatibility, it facilitates easy visualization and planning, aiding in illustrating complex ideas and processes effectively for various industries and tasks.

- Export/import: Capability to import/export diagrams in various formats.

- Has search and filter capabilities within diagrams.

- It lacks many features similar products have.

Google Docs