Google File System

Google Inc. developed the Google File System (GFS), a scalable distributed file system (DFS), to meet the company’s growing data processing needs. GFS provides fault tolerance, reliability, scalability, availability, and performance across large networks of connected nodes. It is built from many storage machines assembled from inexpensive commodity hardware. Its design is tailored to Google’s own data usage and storage requirements; the search engine, which generates enormous volumes of data that must be stored, is only one example.

GFS is designed to tolerate the hardware weaknesses of commercially available servers while still benefiting from their low cost.

GFS is also known as GoogleFS. It manages two types of data: file metadata and file data.

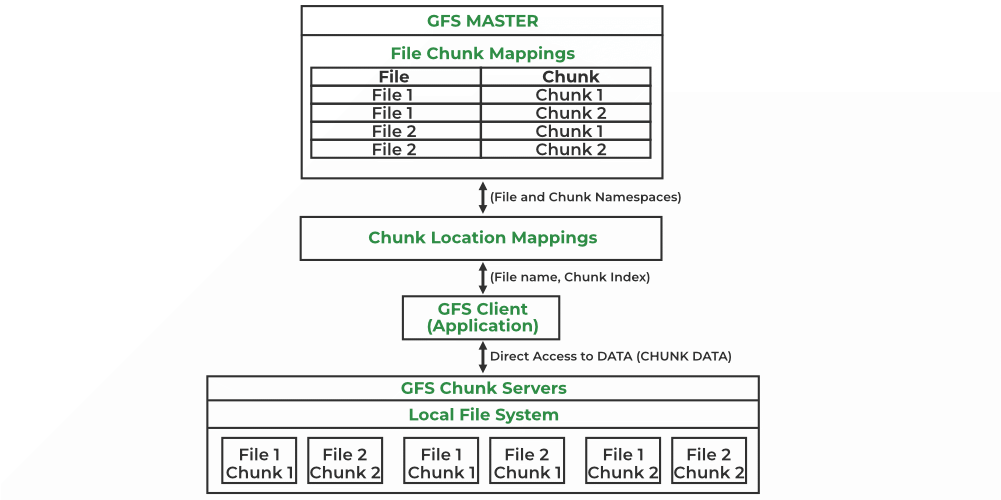

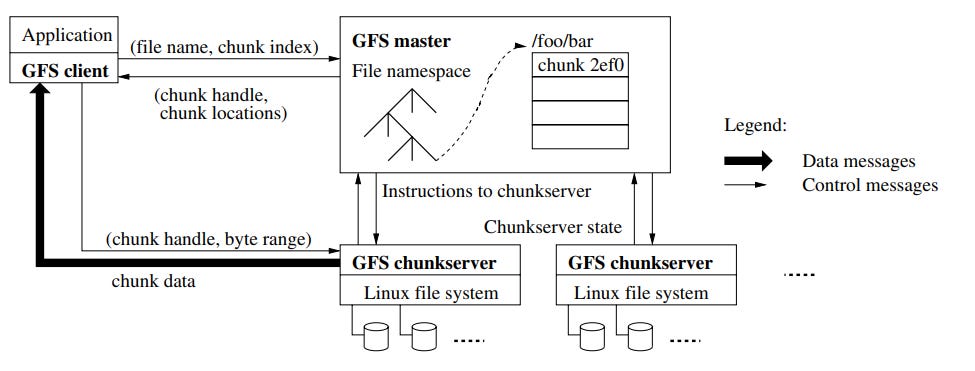

A GFS cluster consists of a single master and several chunk servers that are accessed by many client systems. Chunk servers store data as Linux files on their local disks. Stored data is split into large (64 MB) chunks, and each chunk is replicated on several chunk servers (three by default). The large chunk size reduces network overhead.

GFS is designed to meet the demands of Google’s huge clusters without burdening applications. Files are organized in hierarchical directories and identified by path names. The master manages metadata, including the namespace, access control information, and the mapping from files to chunks. It communicates with each chunk server through periodic heartbeat messages and keeps track of the status each one reports.

The largest GFS clusters described in the original paper have more than 1,000 nodes and 300 TB of disk storage, and are accessed continuously by hundreds of clients.

Components of GFS

A group of computers makes up GFS. A cluster is just a group of connected computers. There could be hundreds or even thousands of computers in each cluster. There are three basic entities included in any GFS cluster as follows:

- GFS Clients: They can be computer programs or applications which may be used to request files. Requests may be made to access and modify already-existing files or add new files to the system.

- GFS Master Server: It serves as the cluster’s coordinator. It keeps a record of the cluster’s activity in an operation log. It also keeps track of metadata, the data that describes chunks. The metadata tells the master server which file each chunk belongs to and where the chunk fits within that file.

- GFS Chunk Servers: They are the workhorses of GFS. They store file chunks of 64 MB each. Chunk servers do not send chunks through the master server; instead, they deliver the requested chunks directly to the client. To ensure reliability, GFS makes several copies of each chunk and stores them on different chunk servers; the default is three copies. Each copy is called a replica.

Features of GFS

- Namespace management and locking.

- Fault tolerance.

- Reduced client and master interaction because of the large chunk size.

- High availability.

- Critical data replication.

- Automatic and efficient data recovery.

- High aggregate throughput.

Advantages of GFS

- High availability. Data remains accessible even if a few nodes fail, thanks to replication. GFS treats component failures as the norm rather than the exception.

- High throughput. Many nodes operate concurrently.

- Reliable storage. Corrupted data can be detected and re-replicated.

Disadvantages of GFS

- Not the best fit for small files.

- Master may act as a bottleneck.

- Poor support for random writes.

- Suited mainly to data that is written once and afterwards only read or appended to.

Samuel Sorial's Blog

The Google File System - Case Study

The main goal of the Google File System is to support the workload of Google's own applications. That goal drove the design decisions: the authors implemented what they actually needed rather than a conventional, general-purpose distributed file system.

Four main decisions shaped the design:

Failures are the default, not the exception. The system runs on the assumption that some parts are down at any given time and that some of them will never come back.

Files are huge. For a company like Google, multi-gigabyte files are more common than kilobyte-sized ones.

Access and update patterns: files are mostly read sequentially rather than randomly, and most updates append data to the end of a file.

Co-designing the applications with the file system API gives the design a great deal of flexibility.

Note: Google doesn't use GFS anymore, as they invented another file system that they are using in their cloud.

The system runs on many commodity machines that fail regularly, so it must detect and handle failures correctly. It stores huge files and sometimes small ones, but it is optimized for the large ones.

Reads come in two kinds: large sequential reads and small random reads. The system is optimized for the former, and application developers are expected to optimize their small reads by sorting and batching them instead of going back and forth through a file.

The write workload typically consists of large amounts of data appended to the end of a file. Once written, a file is usually not edited, so writing at arbitrary offsets is supported but not optimized.

Because the file system is mainly used in producer-consumer patterns, it must let multiple clients append to the same file with minimal synchronization overhead.

Bandwidth is more important than latency: applications that process huge amounts of data care more about sustained throughput than about the response time of individual operations.

While GFS shares many operations with standard file system APIs such as POSIX, it adds two important ones. Snapshot creates a copy of a file or directory at low cost, and record append lets clients append concurrently to the same file without worrying about concurrency problems. Record append is very useful for pub-sub applications and for applications that merge results from many sources.

Architecture

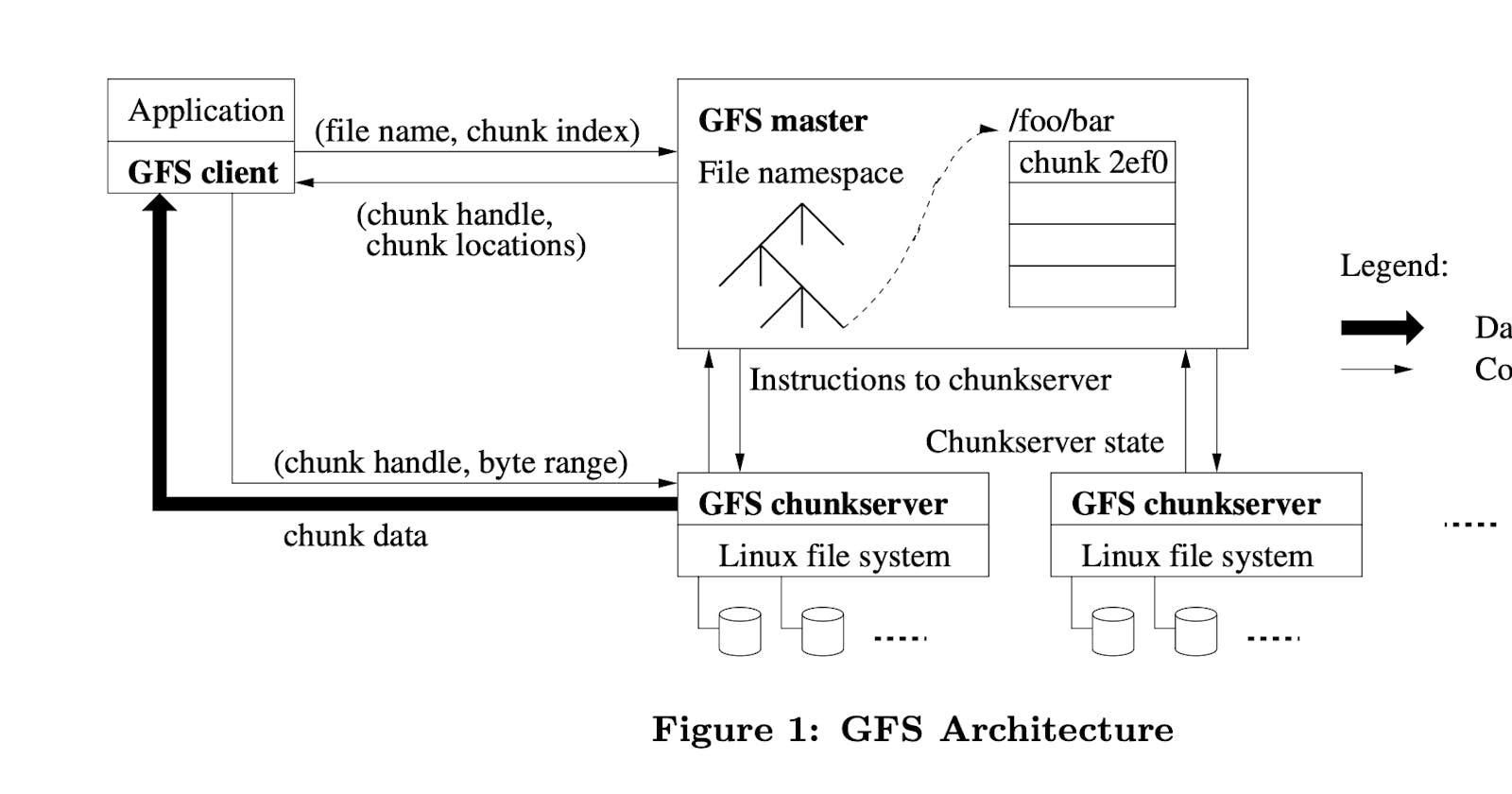

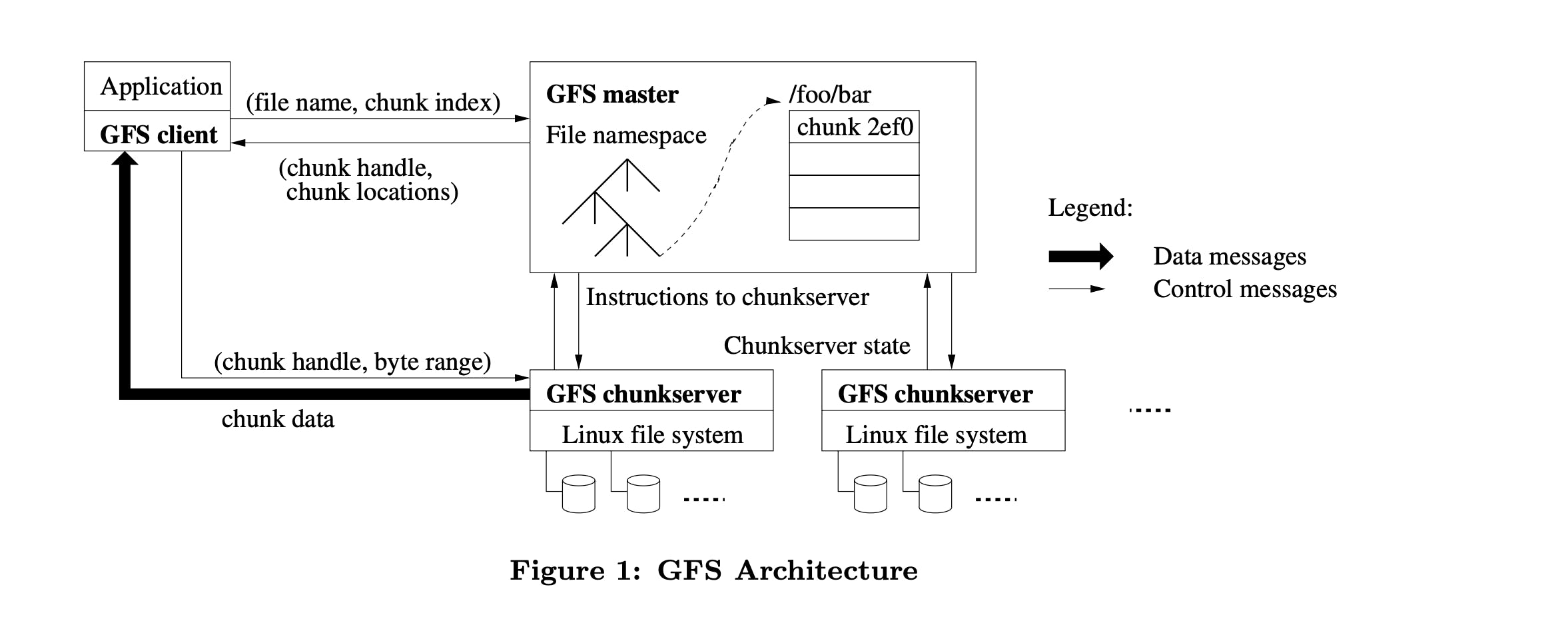

The cluster contains a single master and multiple chunkservers that are accessed by multiple clients. Each chunkserver is a low-cost Linux machine. It is possible to run a chunkserver and a client on the same machine, but flaky application code then increases the chance of the server going down.

Each file is divided into fixed-size chunks. Each chunk is identified by a unique 64-bit chunk handle that the master assigns when the chunk is created. Chunks are replicated on different chunkservers for reliability; there are three replicas by default, but users can change the replication settings.

The master stores only metadata about chunks. It also controls operations such as chunk lease management, garbage collection of orphaned chunks, and chunk migration between servers, and, most importantly, it communicates with the chunkservers through HeartBeat messages to give instructions and collect state.

File data is not cached in GFS, because files are huge and caching them would not be efficient. Avoiding a data cache also simplifies the design by eliminating cache coherence problems. Clients do cache metadata, however, so that they do not have to ask the master repeatedly where a chunk is located, and the Linux buffer cache on each chunkserver already keeps frequently accessed data in memory.

Single master: It simplifies the design and enables the master to make decisions using global knowledge. But it can become a bottleneck, so interaction between the master and clients is kept to a minimum. A client never reads or writes file data through the master; it only asks the master for the location of a specific chunk and caches the answer.

Chunk size: A 64 MB chunk size could have wasted space through internal fragmentation, but lazy space allocation avoids most of that waste. The large chunk size reduces client interactions with the master, reduces network overhead because a client can keep a single persistent TCP connection to a chunkserver while it works on a chunk, and keeps the metadata on the master small. On the other hand, small files become problematic: a chunkserver storing a small file that consists of a single chunk can quickly become a hot spot if many clients access that file. In that case it is better to use a higher replication factor for the file.

The master stores three kinds of metadata: the file and chunk namespaces, the mapping from files to chunk handles, and the mapping from chunk handles to the chunkservers that hold them. The namespaces and the file-to-chunk mapping must survive a restart, so in addition to being kept in memory they are persisted by logging their mutations to the operation log. Keeping all metadata in memory limits the capacity of the system, but since the master needs less than 64 bytes of metadata per 64 MB chunk, this was an acceptable choice.
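A quick back-of-the-envelope calculation shows why in-memory metadata was considered acceptable. The sketch below takes the figure of roughly 64 bytes per 64 MB chunk as an upper bound and ignores namespace entries and replication, so it is only a rough estimate; the function names are illustrative.

```python
CHUNK_SIZE = 64 * 2**20      # 64 MB chunk size, as described in the text
METADATA_PER_CHUNK = 64      # bytes per chunk; treated here as an upper bound


def chunk_count(total_bytes: int) -> int:
    """Number of chunks needed to hold total_bytes (rounded up)."""
    return -(-total_bytes // CHUNK_SIZE)


def master_metadata_bytes(total_bytes: int) -> int:
    """Rough upper bound on chunk metadata held in the master's memory."""
    return chunk_count(total_bytes) * METADATA_PER_CHUNK


stored = 300 * 2**40         # a hypothetical 300 TB of chunk data
print(chunk_count(stored))                     # number of chunks
print(master_metadata_bytes(stored) / 2**20)   # metadata size in MB
```

Under these assumptions the metadata is about a millionth of the stored data, so hundreds of terabytes of chunks need only a few hundred megabytes of master memory.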

The master does not keep a persistent record of chunk locations. Instead, it asks each chunkserver which chunks it holds when the master starts up. This makes each chunkserver the single source of truth about its own chunks, since only the chunkserver can determine whether it actually has a given chunk.

The operation log is critical because it preserves important metadata changes such as the creation of files and chunks. It also plays a large part in keeping data consistent across failures: the master flushes log records to disk before responding to the client, and the log is replicated on remote machines, which makes data loss less likely. Checkpoints are taken during normal operation, and the checkpointing algorithm is designed so that the master keeps serving requests while a checkpoint is being created.
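The log-before-reply idea can be sketched in a few lines. This is a simplified illustration, not the GFS implementation: the JSON-lines format, the `OperationLog` class, and the two operation names are assumptions made for the example, and remote replication and checkpoints are left out.

```python
import json
import os


class OperationLog:
    """Toy write-ahead log for namespace metadata (hypothetical format)."""

    def __init__(self, path: str):
        self.path = path
        self.namespace = {}          # file name -> list of chunk handles
        self._replay()               # rebuild in-memory state after a restart
        self._fh = open(path, "a", encoding="utf-8")

    def _apply(self, record: dict) -> None:
        if record["op"] == "create":
            self.namespace[record["file"]] = []
        elif record["op"] == "add_chunk":
            self.namespace[record["file"]].append(record["handle"])

    def _replay(self) -> None:
        if not os.path.exists(self.path):
            return
        with open(self.path, encoding="utf-8") as fh:
            for line in fh:
                self._apply(json.loads(line))

    def mutate(self, record: dict) -> None:
        # Make the record durable first, then change the in-memory state.
        self._fh.write(json.dumps(record) + "\n")
        self._fh.flush()
        os.fsync(self._fh.fileno())
        self._apply(record)

    def close(self) -> None:
        self._fh.close()
```

Because every mutation reaches the disk before it is applied, reopening the same log file reconstructs the namespace exactly as it was. A checkpoint would simply shorten the replay by storing a snapshot of `namespace`.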

GFS has a relaxed consistency model that serves the needs of the applications that use it. Namespace mutations such as file creation are atomic, because they are handled by the single master and recorded in its operation log, which is flushed to disk to survive failures. After a mutation, a file region is in one of three states:

Consistent: All clients see the same data, regardless of which replica they read from.

Defined: The region is consistent, and clients see in its entirety what the latest successful mutation wrote.

Inconsistent: The mutation failed on some replicas, so different clients may see different data. Such a region is also undefined.

GFS applications accommodate this relaxed model with a few techniques: appending instead of overwriting, checkpointing, and writing self-validating records that carry checksums. Record append has at-least-once semantics, so it may leave duplicate records on some replicas. If the application cannot tolerate duplicates, it can add a unique ID to each record and use it to filter them out.
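A reader that copes with padding and duplicates might look like the sketch below. The record layout (4-byte length, 4-byte CRC32, 8-byte record ID, payload) is an assumption made for this example; the text only says that records carry checksums and, if needed, unique IDs.

```python
import zlib


def encode_record(record_id: int, payload: bytes) -> bytes:
    """Frame a record as: length | crc32 | record id | payload."""
    body = record_id.to_bytes(8, "big") + payload
    header = len(body).to_bytes(4, "big") + zlib.crc32(body).to_bytes(4, "big")
    return header + body


def read_records(region: bytes):
    """Yield (record_id, payload) once per ID, skipping padding and garbage."""
    seen = set()
    pos = 0
    while pos + 8 <= len(region):
        length = int.from_bytes(region[pos:pos + 4], "big")
        crc = int.from_bytes(region[pos + 4:pos + 8], "big")
        body = region[pos + 8:pos + 8 + length]
        if length < 8 or len(body) < length or zlib.crc32(body) != crc:
            pos += 1                 # not a valid record here: resynchronise
            continue
        pos += 8 + length
        record_id = int.from_bytes(body[:8], "big")
        if record_id in seen:
            continue                 # duplicate left behind by a retried append
        seen.add(record_id)
        yield record_id, body[8:]
```

The checksum lets the reader tell real records from padding, and the ID set filters out records that a retried append wrote twice. Resynchronising byte by byte keeps the example short; a production format would likely use a stronger marker to rule out accidental matches.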

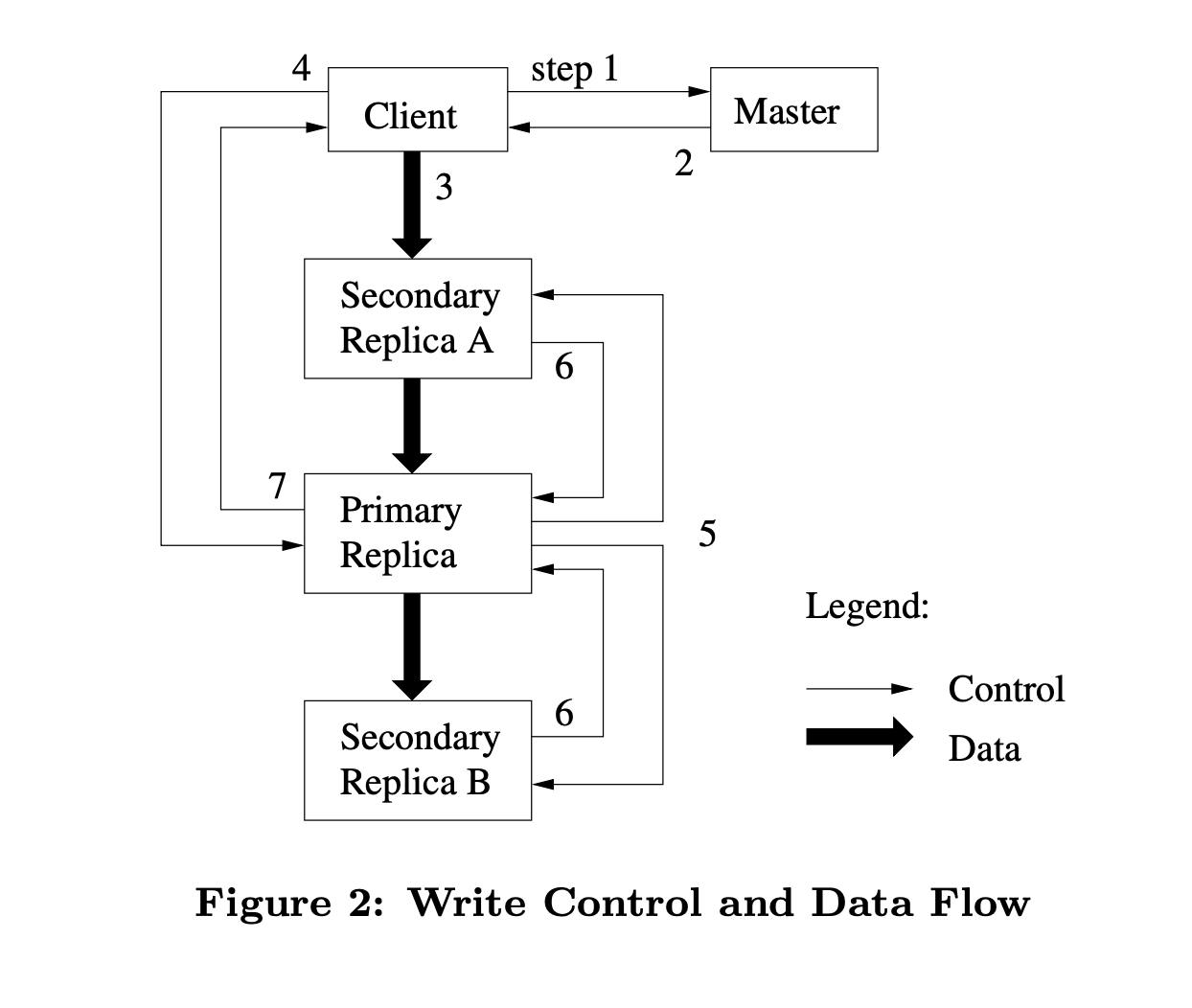

System Interactions

For each chunk, the master chooses one replica to be responsible for ordering the mutations on that chunk; this replica is called the primary. The master grants the primary a lease that initially expires after 60 seconds. The primary can request extensions, which are piggybacked on the HeartBeat messages it exchanges with the master; once a lease has expired, the master may grant a new one to another replica. The primary knows when its lease expires and refuses mutations after that point. So if the master goes down while a client is in the middle of a mutation, the primary stops accepting mutations when the lease expires, and the master can choose another primary once it is back.
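The lease rule can be illustrated with a small sketch. The `Lease` and `Primary` classes are hypothetical, and the clock is passed in explicitly so that the behaviour is easy to follow; a real system would also have to account for clock drift between machines.

```python
LEASE_SECONDS = 60.0  # initial lease duration mentioned in the text


class Lease:
    def __init__(self, granted_at: float, duration: float = LEASE_SECONDS):
        self.expires_at = granted_at + duration

    def is_valid(self, now: float) -> bool:
        return now < self.expires_at

    def extend(self, now: float, duration: float = LEASE_SECONDS) -> None:
        """Extension granted by the master, e.g. piggybacked on a heartbeat."""
        self.expires_at = max(self.expires_at, now + duration)


class Primary:
    def __init__(self, lease: Lease):
        self.lease = lease
        self.applied = []

    def mutate(self, op: str, now: float) -> bool:
        # The primary itself refuses mutations once its lease has expired.
        if not self.lease.is_valid(now):
            return False
        self.applied.append(op)
        return True
```

Because the primary checks the expiry on its own, it stops accepting mutations even when it cannot reach the master, which is what makes it safe for the master to appoint a new primary afterwards.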

Mutation steps:

The client asks the master which chunkserver holds the lease for the chunk to be modified.

The master replies with the identity of the primary and the locations of the secondary replicas.

The client pushes the data (without the mutation request itself) to the nearest replica, which forwards it to the others.

Once all replicas have received the data, the client sends the write request to the primary.

The primary decides the order of the mutations and forwards the request, with that order, to the secondary replicas.

Each secondary tells the primary when it has applied the mutations in the same order.

The primary replies to the client with success, or reports any errors that occurred.
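The steps above can be sketched as a small in-memory simulation. The `Replica` and `PrimaryReplica` classes and the use of string data IDs are assumptions for illustration; networking, leases, and error recovery are left out.

```python
class Replica:
    def __init__(self):
        self.staged = {}             # data pushed by clients, not yet applied
        self.chunk = bytearray()     # the chunk contents
        self.next_serial = 0         # serial number this replica expects next

    def push(self, data_id: str, data: bytes) -> None:
        """Data flow: the data is staged, but the chunk is not changed yet."""
        self.staged[data_id] = data

    def apply(self, serial: int, data_id: str) -> bool:
        """Control flow: apply staged data in the order chosen by the primary."""
        if serial != self.next_serial or data_id not in self.staged:
            return False
        self.chunk += self.staged.pop(data_id)
        self.next_serial += 1
        return True


class PrimaryReplica(Replica):
    def __init__(self, secondaries):
        super().__init__()
        self.secondaries = secondaries

    def write(self, data_id: str) -> bool:
        serial = self.next_serial    # the primary picks the mutation order
        if not self.apply(serial, data_id):
            return False
        results = [s.apply(serial, data_id) for s in self.secondaries]
        return all(results)          # success only if every replica applied it
```

Two clients can push their data in any order; because every replica applies mutations in the serial order picked by the primary, all replicas end up with identical chunk contents.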

Decoupling data flow from control flow is an intelligent decision to maximize the utilization of the network. It starts by choosing the nearest node to push data into it, which starts pipelining data to other nodes from the first byte it receives, making the best usage of TCP connections. Later when data is fully received on all nodes, the client can start sending commands to specify what operations it needs to be done with this data.
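The benefit of pipelining can be estimated with simple arithmetic. The GFS paper gives the ideal time to push B bytes to R replicas as B/T + RL, where T is the network throughput and L is the latency between two machines. The sketch below only evaluates that expression; the function and parameter names are illustrative.

```python
def ideal_transfer_seconds(num_bytes: int, replicas: int,
                           throughput_bps: float, hop_latency_s: float) -> float:
    """Ideal pipelined transfer time: B/T + R*L (no congestion assumed)."""
    return num_bytes * 8 / throughput_bps + replicas * hop_latency_s


# 1 MB to 3 replicas over 100 Mbps links with 0.5 ms latency per hop
print(ideal_transfer_seconds(1_000_000, 3, 100e6, 0.0005))
```

With pipelining, the transfer time is dominated by B/T and grows only by one hop latency per extra replica, instead of multiplying the whole transfer by the number of replicas.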

Master Operations

To support operations such as snapshots, the master uses locks over regions of the namespace to keep concurrent operations from producing incorrect data. Lock granularity therefore has to be chosen carefully so that operations wait as little as possible. For example, creating a file requires read locks on every directory along the path that contains it, and a write lock on the full path of the file itself. This scheme allows concurrent mutations on different files in the same directory. It also prevents deadlocks, because locks are always acquired in a consistent order: first by level in the namespace tree, and lexicographically within the same level.
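The locking scheme can be sketched as a function that computes which locks an operation needs, plus a check for conflicts between two lock sets. Both helper names are hypothetical, and the sketch only computes lock sets; it does not implement the locks themselves.

```python
def locks_needed(path: str, write_leaf: bool = True):
    """Read locks on every ancestor, read or write lock on the full path."""
    parts = path.strip("/").split("/")
    prefixes = ["/" + "/".join(parts[:i]) for i in range(1, len(parts) + 1)]
    locks = [(p, "read") for p in prefixes[:-1]]
    locks.append((prefixes[-1], "write" if write_leaf else "read"))
    # Consistent acquisition order: by depth first, then lexicographically.
    return sorted(locks, key=lambda item: (item[0].count("/"), item[0]))


def conflicts(a, b) -> bool:
    """Two lock sets conflict if they share a name and one side writes it."""
    held = dict(a)
    return any(name in held and "write" in (mode, held[name])
               for name, mode in b)


create_a = locks_needed("/home/user/a")
create_b = locks_needed("/home/user/b")
snapshot = locks_needed("/home/user")
print(conflicts(create_a, create_b))   # two creations in the same directory
print(conflicts(create_a, snapshot))   # creation versus snapshot of the parent
```

Two file creations in the same directory only share read locks, so they can proceed in parallel, while a snapshot of `/home/user` takes a write lock on that name and is therefore serialized against both.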

Spreading replicas across machines is not enough to ensure availability and to make the best use of the network; replicas of a chunk must also be spread across different racks. This ensures that data is not lost or unavailable if an entire rack fails. It also lets reads of a chunk exploit the aggregate bandwidth of several racks. The drawback is that write traffic has to flow between racks, a trade-off the designers accepted.
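A minimal rack-aware placement policy could look like the sketch below. It only captures the "different racks first" rule described above; the function name and the server-to-rack mapping are assumptions, and a real placement policy would also weigh factors such as disk utilization.

```python
def place_replicas(servers: dict, copies: int = 3) -> list:
    """Pick chunkservers for a chunk, preferring one replica per rack.

    `servers` maps a chunkserver name to the name of its rack.
    """
    chosen, used_racks = [], set()
    # First pass: at most one replica per rack.
    for name, rack in sorted(servers.items()):
        if len(chosen) == copies:
            break
        if rack not in used_racks:
            chosen.append(name)
            used_racks.add(rack)
    # Second pass: if there are fewer racks than copies, fill up anyway.
    for name in sorted(servers):
        if len(chosen) == copies:
            break
        if name not in chosen:
            chosen.append(name)
    return chosen


cluster = {"cs1": "rackA", "cs2": "rackA", "cs3": "rackB", "cs4": "rackC"}
print(place_replicas(cluster))
```

With three racks available, each replica lands on a different rack, so the loss of a single rack leaves two copies intact.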

When a file is deleted, the master logs the deletion and renames the file to a hidden name that includes the deletion timestamp. The file is not physically removed right away: during its regular scan of the file system namespace, the master removes hidden files that have existed for more than three days (the interval is configurable). Until then, the file can still be read under its hidden name, or undeleted by renaming it back. When the hidden file is finally removed, its in-memory metadata is erased, which cuts its links to all its chunks.

In a similar way, the master scans for orphaned chunks (those not associated with any file) and erases their metadata. Each chunk server reports a subset of its chunks during regular HeartBeat messages exchange with the master, which in turn instructs the chunk server which chunks to delete based on its metadata records.

This garbage collection approach simplifies storage reclamation in a large-scale distributed system, especially where component failures are common. It allows for the efficient cleanup of unnecessary replicas and merges storage reclamation into regular background activities, making it more reliable and cost-effective. This approach also provides a safety net against accidental irreversible deletion.

However, the delay in storage reclamation can be a disadvantage when fine-tuning usage in tight storage situations or when applications frequently create and delete temporary files. To address this, GFS allows for expedited storage reclamation if a deleted file is explicitly deleted again. It also offers users the ability to apply different replication and reclamation policies to different parts of the namespace.
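The lazy deletion scheme described in this section can be sketched as follows. The hidden-name format, the `Master` class, and its method names are assumptions for illustration; the text only says that the hidden name includes the deletion timestamp.

```python
RETENTION_SECONDS = 3 * 24 * 3600    # three days, configurable in GFS


class Master:
    def __init__(self):
        self.files = {}              # file name -> list of chunk handles

    def delete(self, name: str, now: float) -> str:
        """Deletion is just a rename to a hidden, timestamped name."""
        hidden = f".deleted.{int(now)}.{name}"
        self.files[hidden] = self.files.pop(name)
        return hidden

    def undelete(self, hidden: str) -> None:
        name = hidden.split(".", 3)[3]
        self.files[name] = self.files.pop(hidden)

    def scan_namespace(self, now: float) -> None:
        """Regular scan: drop hidden files older than the retention period."""
        for name in list(self.files):
            if name.startswith(".deleted."):
                deleted_at = int(name.split(".", 3)[2])
                if now - deleted_at > RETENTION_SECONDS:
                    del self.files[name]

    def orphans(self, reported_handles) -> set:
        """Chunks a chunkserver reports that no file references any more."""
        live = {h for handles in self.files.values() for h in handles}
        return set(reported_handles) - live
```

Once the scan has removed a hidden file, its chunks no longer appear in any file's metadata, so the next chunkserver report marks them as orphans that the chunkserver is free to delete.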

Chunk replicas can become stale if a chunkserver fails and misses updates to the chunk during its downtime. To manage this, the master maintains a chunk version number to differentiate between up-to-date and stale replicas.

Whenever the master grants a new lease on a chunk, it increases the chunk's version number and notifies the up-to-date replicas. Both the master and these replicas record the new version number in their persistent state before any client is informed, hence before any writing to the chunk can commence. If a replica is unavailable at this time, its version number remains unchanged. The master identifies stale replicas when the chunkserver restarts and reports its chunk and version numbers. If the master notices a version number higher than its record, it assumes its previous attempt to grant the lease failed, and considers the higher version as up-to-date.

The master removes stale replicas during its regular garbage collection, effectively treating them as non-existent when responding to client requests for chunk information. As an additional precaution, the master includes the chunk version number when informing clients about which chunkserver holds a lease on a chunk, or when it instructs a chunkserver to read the chunk from another chunkserver during a cloning operation. The client or chunkserver verifies this version number during operations, ensuring that it always accesses up-to-date data.
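The version number mechanism can be sketched with plain dictionaries. The `VersionTracker` class is hypothetical, and each replica is modelled as a dictionary from chunk handle to version number; persistence and the actual removal of stale replicas are left out.

```python
class VersionTracker:
    """Master-side bookkeeping of chunk version numbers (illustrative)."""

    def __init__(self):
        self.latest = {}             # chunk handle -> latest known version

    def grant_lease(self, handle: str, reachable_replicas) -> int:
        """Bump the version and tell every replica that is currently up."""
        version = self.latest.get(handle, 0) + 1
        self.latest[handle] = version
        for replica in reachable_replicas:
            replica[handle] = version
        return version

    def check_report(self, handle: str, reported_version: int) -> str:
        """Classify a replica when its chunkserver reports after a restart."""
        known = self.latest.get(handle, 0)
        if reported_version < known:
            return "stale"
        if reported_version > known:
            # The master's own record is behind: adopt the higher version.
            self.latest[handle] = reported_version
        return "current"
```

A replica that was down while a lease was granted keeps its old version number, so the master recognises it as stale as soon as its chunkserver reports back.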

This case study was written after a careful reading of the GFS paper by Google engineers. If you think anything is wrong, please contact me.

- Ghemawat, S., Gobioff, H., & Leung, S. T. (2003). The Google File System. In Proceedings of the nineteenth ACM symposium on Operating systems principles - SOSP '03 (pp. 29-43). ACM Press. doi.org/10.1145/945445.945450

The Polymathic Engineer

Google File System

A distributed storage case study: the Google File System.

Hi Friends,

Welcome to the 78th issue of the Polymathic Engineer newsletter.

This week, we will discuss an interesting case study for distributed storage: the Google File System.

The outline will be as follows:

Introduction

Design Assumptions

Architecture

Architectural Considerations

Data Integrity

The Google File System (GFS) was designed and introduced in the early 2000s to meet the unique needs of Google’s extensive data processing requirements.

During that period, Google rapidly expanded its infrastructure to support various web services, including search, indexing, and analytics.

The existing file systems could not handle the scale and performance demands, leading Google to create GFS to meet its specific needs.

GFS was designed to provide high scalability and fault tolerance, ensuring data is stored reliably and accessed quickly, even if hardware failures and network issues occur.

Although Google later replaced it with a more advanced file system called Colossus, GFS significantly influenced the development of other distributed file systems.

For example, the Hadoop Distributed File System (HDFS), a key component of the Apache Hadoop project, was directly inspired by GFS. HDFS adopted many core principles of GFS, such as its distributed architecture, fault tolerance, and scalability, and became widely used for big data processing in the open-source community.

In the following sections, we'll delve into the most critical aspects of GFS.

GFS Design Goals

The goal of supporting Google's application workload affected several crucial design decisions. Here are some key assumptions:

Failures are the Default, Not the Exception: GFS assumes that component failures (e.g., disk failures, network partitions, server crashes) are common and must be managed automatically without human intervention.

Handling Large Files: Google typically works with large files, often several gigabytes. This influenced GFS to optimize for large files rather than small ones.

Access and Update Patterns: GFS is optimized for sequential reads and appends rather than random reads and writes. Most updates are performed by appending data, which aligns with Google’s data processing patterns.

GFS Architecture

GFS employs a distributed architecture composed of multiple nodes organized into a cluster.

There are three primary types of nodes in a GFS cluster:

Master Node: The master node acts as the central control unit of the GFS cluster. It maintains metadata about the file system, including the namespace and access control information. Files in GFS are divided into fixed-size chunks, each identified by a unique 64-bit handle. The master assigns the handle to each chunk and keeps the mapping from files to chunks. All metadata is stored in memory, but the namespaces and the file-to-chunk mapping are also persisted by logging their mutations to an operation log. This log is stored on the master’s disk and replicated on remote machines, making it possible to restore the master state simply and reliably after a crash.

Chunk Servers : These are the storage nodes where the data is stored. Each chunk server stores data in fixed-size chunks (typically 64MB), and each chunk is replicated across multiple chunk servers to ensure fault tolerance. Chunk servers regularly communicate with the master to report their status and the chunks they hold. GFS can scale horizontally by adding more chunk servers to the cluster if necessary.

Original paper by Sanjay Ghemawat, Howard Gobioff, and Shun-Tak Leung: https://static.googleusercontent.com/media/research.google.com/pt-BR//archive/gfs-sosp2003.pdf

When building a distributed file system there are many concerns to take into consideration: component failures (disk, memory, networking, power supply, and so on), concurrent file operations, bandwidth, latency, and many others. This paper presents the scalable, distributed file system built to meet Google’s storage needs: GFS. Despite sharing some of the goals of previous distributed file systems, GFS is designed on the assumptions that components will fail, that files are huge by default (multi-GB files are common), and that most files are mutated by appending new data rather than overwriting existing data.

Based on these assumptions, the Google File System architecture consists of a single master node and multiple chunk servers that store the chunks of files on their local disks. Clients contact the master node only for metadata: the master tells a client which chunk servers hold the chunks it needs, and the client then exchanges file data directly with those chunk servers. This separation between control and data flow reduces the chance of the master node becoming a bottleneck and simplifies the overall system design.

To evaluate the performance of GFS, the authors ran micro-benchmarks on a cluster with one master, 16 chunk servers, and 16 clients. These benchmarks helped the authors detect slow write operations and check how the system behaved under concurrent record append operations. The paper also reports GFS performance in real-world Google clusters, with graphs of the aggregate read, write, and append rates compared with the network bandwidth limits imposed by the cluster topology.

Some of the related work had already proposed ideas to increase fault tolerance in distributed file systems, but it differs from GFS mainly in the caching strategy (GFS does not cache file data at all) and in the centralized approach to controlling chunk placement and replication policies.

The paper makes a valuable contribution by describing how GFS supports large-scale data processing workloads through data replication, data corruption detection, and high aggregate throughput achieved by separating file system control from data transfer. This system is an essential component of Google’s overall platform, and the lessons learned can definitely be applied by others who aim to implement distributed file systems.

Stop Thinking, Just Do!

Sungsoo Kim's Blog

The Google File System (GFS)

29 April 2014

Article source.

- Title: DISTRIBUTED SYSTEMS Concepts and Design Fifth Edition

- Authors: George Coulouris, Jean Dollimore, Tim Kindberg and Gordon Blair

Chapter 12 presented a detailed study of the topic of distributed file systems, analyzing their requirements and their overall architecture and examining two case studies in detail, namely NFS and AFS. These file systems are general-purpose distributed file systems offering file and directory abstractions to a wide variety of applications in and across organizations. The Google File System (GFS) is also a distributed file system; it offers similar abstractions but is specialized for the very particular requirements that Google has in terms of storage and access to very large quantities of data [Ghemawat et al. 2003]. These requirements led to very different design decisions from those made in NFS and AFS (and indeed other distributed file systems), as we will see below. We start our discussion of GFS by examining the particular requirements identified by Google.

GFS requirements

The overall goal of GFS is to meet the demanding and rapidly growing needs of Google’s search engine and the range of other web applications offered by the company. From an understanding of this particular domain of operation, Google identified the following requirements for GFS (see Ghemawat et al. [2003]):

The first requirement is that GFS must run reliably on the physical architecture discussed in Section 21.3.1 – that is a very large system built from commodity hardware. The designers of GFS started with the assumption that components will fail (not just hardware components but also software components) and that the design must be sufficiently tolerant of such failures to enable application-level services to continue their operation in the face of any likely combination of failure conditions.

GFS is optimized for the patterns of usage within Google, both in terms of the types of files stored and the patterns of access to those files. The number of files stored in GFS is not huge in comparison with other systems, but the files tend to be massive. For example, Ghemawat et al. [2003] report the need for perhaps one million files averaging 100 megabytes in size, but with some files in the gigabyte range. The patterns of access are also atypical of file systems in general. Accesses are dominated by sequential reads through large files and sequential writes that append data to files, and GFS is very much tailored towards this style of access. Small, random reads and writes do occur (the latter very rarely) and are supported, but the system is not optimized for such cases. These file patterns are influenced, for example, by the storage of many web pages sequentially in single files that are scanned by a variety of data analysis programs. The level of concurrent access is also high in Google, with large numbers of concurrent appends being particularly prevalent, often accompanied by concurrent reads.

GFS must meet all the requirements for the Google infrastructure as a whole; that is, it must scale (particularly in terms of volume of data and number of clients), it must be reliable in spite of the assumption about failures noted above, it must perform well and it must be open in that it should support the development of new web applications. In terms of performance and given the types of data file stored, the system is optimized for high and sustained throughput in reading data, and this is prioritized over latency. This is not to say that latency is unimportant, rather, that this particular component (GFS) needs to be optimized for high-performance reading and appending of large volumes of data for the correct operation of the system as a whole.

These requirements are markedly different from those for NFS and AFS (for example), which must store large numbers of often small files and where random reads and writes are commonplace. These distinctions lead to the very particular design decisions discussed below.

GFS interface

GFS provides a conventional file system interface offering a hierarchical namespace with individual files identified by pathnames. Although the file system does not provide full POSIX compatibility, many of the operations will be familiar to users of such file systems (see, for example, Figure 12.4 [1]):

create – create a new instance of a file;

delete – delete an instance of a file;

open – open a named file and return a handle;

close – close a given file specified by a handle;

read – read data from a specified file;

write – write data to a specified file.

It can be seen that the main GFS operations are very similar to those for the flat file service described in Chapter 12 (see Figure 12.6 [1]). We should assume that the GFS read and write operations take a parameter specifying a starting offset within the file, as is the case for the flat file service.

The API also offers two more specialized operations, snapshot and record append . The former operation provides an efficient mechanism to make a copy of a particular file or directory tree structure. The latter supports the common access pattern mentioned above whereby multiple clients carry out concurrent appends to a given file.

GFS architecture

The most influential design choice in GFS is the storage of files in fixed-size chunks , where each chunk is 64 megabytes in size. This is quite large compared to other file system designs. At one level this simply reflects the size of the files stored in GFS. At another level, this decision is crucial to providing highly efficient sequential reads and appends of large amounts of data. We return to this point below, once we have discussed more details of the GFS architecture.

Given this design choice, the job of GFS is to provide a mapping from files to chunks and then to support standard operations on files, mapping down to operations on individual chunks. This is achieved with the architecture shown in Figure 21.9, which shows an instance of a GFS file system as it maps onto a given physical cluster. Each GFS cluster has a single master and multiple chunkservers (typically on the order of hundreds), which together provide a file service to large numbers of clients concurrently accessing the data.

The role of the master is to manage metadata about the file system defining the namespace for files, access control information and the mapping of each particular file to the associated set of chunks. In addition, all chunks are replicated (by default on three independent chunkservers, but the level of replication can be specified by the programmer). The location of the replicas is maintained in the master. Replication is important in GFS to provide the necessary reliability in the event of (expected) hardware and software failures. This is in contrast to NFS and AFS, which do not provide replication with updates (see Chapter 12 [1]).

The key metadata is stored persistently in an operation log that supports recovery in the event of crashes (again enhancing reliability). In particular, all the information mentioned above is logged apart from the location of replicas (the latter is recovered by polling chunkservers and asking them what replicas they currently store).

Although the master is centralized, and hence a single point of failure, the operations log is replicated on several remote machines, so the master can be readily restored on failure. The benefit of having such a single, centralized master is that it has a global view of the file system and hence it can make optimum management decisions, for example related to chunk placement. This scheme is also simpler to implement, allowing Google to develop GFS in a relatively short period of time. McKusick and Quinlan [2010] present the rationale for this rather unusual design choice.

When clients need to access data starting from a particular byte offset within a file, the GFS client library will first translate this to a file name and chunk index pair (easily computed given the fixed size of chunks). This is then sent to the master in the form of an RPC request (using protocol buffers). The master replies with the appropriate chunk identifier and location of the replicas, and this information is cached in the client and used subsequently to access the data by direct RPC invocation to one of the replicated chunkservers. In this way, the master is involved at the start and is then completely out of the loop, implementing a separation of control and data flows – a separation that is crucial to maintaining high performance of file accesses. Combined with the large chunk size, this implies that, once a chunk has been identified and located, the 64 megabytes can then be read as fast as the file server and network will allow without any other interactions with the master until another chunk needs to be accessed. Hence interactions with the master are minimized and throughput optimized. The same argument applies to sequential appends.
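The offset translation and the client-side location cache can be illustrated with a short sketch. The names `locate`, `ClientLocationCache` and `master_lookup` are hypothetical, and the master is stood in for by a plain Python function rather than an RPC; only the 64-megabyte chunk size is taken from the text.

```python
CHUNK_SIZE = 64 * 1024 * 1024  # fixed 64 MB chunk size, as stated in the text


def locate(file_name, byte_offset):
    """Translate (file name, byte offset) into (file name, chunk index, offset in chunk)."""
    if byte_offset < 0:
        raise ValueError("byte offset must be non-negative")
    chunk_index, offset_in_chunk = divmod(byte_offset, CHUNK_SIZE)
    return file_name, chunk_index, offset_in_chunk


class ClientLocationCache:
    """Caches replica locations per (file, chunk index); file data is never cached."""

    def __init__(self, master_lookup):
        self._master_lookup = master_lookup  # stand-in for the RPC to the master
        self._cache = {}
        self.master_calls = 0

    def replicas_for(self, file_name, byte_offset):
        name, index, _ = locate(file_name, byte_offset)
        key = (name, index)
        if key not in self._cache:
            # Only the first access to a chunk involves the master.
            self.master_calls += 1
            self._cache[key] = self._master_lookup(name, index)
        return self._cache[key]


def fake_master(name, index):
    # Hypothetical reply: a chunk identifier and three replica locations.
    return (f"{name}#{index}", ["cs1", "cs2", "cs3"])


cache = ClientLocationCache(fake_master)
cache.replicas_for("/logs/a", 10)          # first access: asks the master
cache.replicas_for("/logs/a", 1_000_000)   # same chunk: served from the cache
cache.replicas_for("/logs/a", CHUNK_SIZE)  # next chunk: asks the master again
```

After these three reads, `cache.master_calls` is 2, since the first two offsets fall inside the same chunk.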

Note that one further repercussion of the large chunk size is that GFS maintains proportionally less metadata (if a chunk size of 64 kilobytes was adopted, for example, the volume of metadata would increase by a factor of 1,000). This in turn implies that GFS masters can generally maintain all their metadata in main memory (but see below), thus significantly decreasing the latency for control operations.
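The factor quoted above is easy to check by counting chunks. The sketch below uses a 300-terabyte store purely as an illustrative figure (an assumption for the arithmetic, not a statement about any particular cluster); the exact ratio between the two chunk sizes is 1,024, which the text rounds to 1,000.

```python
KB, MB, TB = 1024, 1024 ** 2, 1024 ** 4


def chunk_count(total_bytes, chunk_size):
    """Number of chunks needed to hold total_bytes (ceiling division)."""
    return -(-total_bytes // chunk_size)


total = 300 * TB                       # illustrative capacity only
large = chunk_count(total, 64 * MB)    # chunks to track with 64 MB chunks
small = chunk_count(total, 64 * KB)    # chunks to track with 64 KB chunks
ratio = small // large                 # growth in per-chunk metadata entries

print(large, small, ratio)
```

Since the master keeps one entry per chunk, the number of entries it must hold in memory grows by the same ratio.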

As the system has grown in usage, problems have emerged with the centralized master scheme:

Despite the separation of control and data flow and the performance optimization of the master, it is emerging as a bottleneck in the design.

Despite the reduced amount of metadata stemming from the large chunk size, the amount of metadata stored by each master is increasing to a level where it is difficult to actually keep all metadata in main memory.

For these reasons, Google is now working on a new design featuring a distributed master solution.

As we saw in Chapter 12, caching often plays a crucial role in the performance and scalability of a file system (see also the more general discussion on caching in Section 2.3.1). Interestingly, GFS does not make heavy use of caching. As mentioned above, information about the locations of chunks is cached at clients when first accessed, to minimize interactions with the master. Apart from that, no other client caching is used. In particular, GFS clients do not cache file data. Given the fact that most accesses involve sequential streaming, for example reading through web content to produce the required inverted index, such caches would contribute little to the performance of the system. Furthermore, by limiting caching at clients, GFS also avoids the need for cache coherency protocols.

GFS also does not provide any particular strategy for server-side caching (that is, on chunkservers), relying instead on the buffer cache in Linux to maintain frequently accessed data in memory.

GFS is a key example of the use of logging in Google to support debugging and performance analysis. In particular, GFS servers all maintain extensive diagnostic logs that store significant server events and all RPC requests and replies. These logs are monitored continually and used in the event of system problems to identify the underlying causes.

Managing consistency in GFS

Given that chunks are replicated in GFS, it is important to maintain the consistency of replicas in the face of operations that alter the data – that is, the write and record append operations. GFS provides an approach for consistency management that:

maintains the previously mentioned separation between control and data and hence allows high-performance updates to data with minimal involvement of masters;

provides a relaxed form of consistency recognizing, for example, the particular semantics offered by record append.

The approach proceeds as follows.

When a mutation (i.e., a write, append or delete operation) is requested for a chunk, the master grants a chunk lease to one of the replicas, which is then designated as the primary. This primary is responsible for providing a serial order for all the currently pending concurrent mutations to that chunk. A global ordering is thus provided by the ordering of the chunk leases combined with the order determined by that primary. In particular, the lease permits the primary to make mutations on its local copies and to control the order of the mutations at the secondary copies; another primary will then be granted the lease, and so on.

The steps involved in mutations are therefore as follows (slightly simplified):

On receiving a request from a client, the master grants a lease to one of the replicas (the primary) and returns the identity of the primary and other (secondary) replicas to the client.

The client sends all data to the replicas, and this is stored temporarily in a buffer cache and not written until further instruction (again, maintaining a separation of control flow from data flow coupled with a lightweight control regime based on leases).

Once all the replicas have acknowledged receipt of this data, the client sends a write request to the primary; the primary then determines a serial order for concurrent requests and applies updates in this order at the primary site.

The primary requests that the same mutations in the same order are carried out at secondary replicas and the secondary replicas send back an acknowledgement when the mutations have succeeded.

If all acknowledgements are received, the primary reports success back to the client; otherwise, a failure is reported indicating that the mutation succeeded at the primary and at some but not all of the replicas. This is treated as a failure and leaves the replicas in an inconsistent state. GFS attempts to overcome this failure by retrying the failed mutations. In the worst case, this will not succeed and therefore consistency is not guaranteed by the approach.
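The steps above can be sketched in a few lines. This is a simplified, single-process illustration under stated assumptions: the classes `Replica` and `Primary` and the function `client_write` are hypothetical names, the lease is assumed to have been granted already (the `Primary` object stands for the current lease holder), and retries are left out.

```python
class Replica:
    def __init__(self, name, fail_on_apply=False):
        self.name = name
        self.buffer = {}   # data_id -> bytes, staged but not yet written
        self.chunk = []    # applied mutations as (serial number, data)
        self.fail_on_apply = fail_on_apply  # simulates a failing replica

    def push(self, data_id, data):
        """Data flow: stage the data and acknowledge receipt."""
        self.buffer[data_id] = data
        return True

    def apply(self, serial, data_id):
        """Control flow: apply a staged mutation in the given serial order."""
        if self.fail_on_apply:
            return False
        self.chunk.append((serial, self.buffer.pop(data_id)))
        return True


class Primary(Replica):
    def __init__(self, name, secondaries):
        super().__init__(name)
        self.secondaries = secondaries
        self.next_serial = 0

    def write(self, data_id):
        # The primary chooses the serial order and applies locally first.
        serial = self.next_serial
        self.next_serial += 1
        self.apply(serial, data_id)
        # Secondaries carry out the same mutation in the same order.
        acks = [s.apply(serial, data_id) for s in self.secondaries]
        return all(acks)  # success only if every replica acknowledged


def client_write(primary, data_id, data):
    replicas = [primary, *primary.secondaries]
    # The client sends the data to all replicas before any write request.
    if not all(r.push(data_id, data) for r in replicas):
        return False
    # Only then does the write request go to the primary.
    return primary.write(data_id)


s1, s2 = Replica("s1"), Replica("s2")
p = Primary("p", [s1, s2])
ok_a = client_write(p, "a", b"first")
ok_b = client_write(p, "b", b"second")

bad = Replica("bad", fail_on_apply=True)
p2 = Primary("p2", [bad])
ok_c = client_write(p2, "c", b"lost")
```

In the second scenario the write is reported as failed even though the primary has applied it, which is the inconsistent state the last step describes.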

It is interesting to relate this scheme to the techniques for replication discussed in Chapter 18. GFS adopts a passive replication architecture with an important twist. In passive replication, updates are sent to the primary and the primary is then responsible for sending out subsequent updates to the backup servers and ensuring they are coordinated. In GFS, the client sends data to all the replicas but the request goes to the primary, which is then responsible for scheduling the actual mutations (the separation between data flow and control flow mentioned above). This allows the transmission of large quantities of data to be optimized independently of the control flow.

In mutations, there is an important distinction between write and record append operations. Writes specify an offset at which mutations should occur, whereas record appends do not (the mutations are applied at the end of the file wherever this might be at a given point in time). In the former case the location is predetermined, whereas in the latter case the system decides. Concurrent writes to the same location are not serializable and may result in corrupted regions of the file. With record append operations, GFS guarantees the append will happen at least once and atomically (that is, as a contiguous sequence of bytes); the system does not guarantee, though, that all copies of the chunk will be identical (some may have duplicate data). Again, it is helpful to relate this to the material in Chapter 18 [1]. The replication strategies in Chapter 18 are all general-purpose, whereas this strategy is domain-specific and weakens the consistency guarantees, knowing the resultant semantics can be tolerated by Google applications and services (a further example of domain-specific replication – the replication algorithm by Xu and Liskov [1989] for tuple spaces can be found in Section 6.5.2 [1]).
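To see why at-least-once appends can leave replicas with different contents, consider the following sketch. It is an illustration under assumptions, not the GFS protocol: each replica is modelled as a Python list of record slots, a failed attempt is simply retried at a new offset chosen by the primary, shorter replicas are padded up to that offset, and records carry a unique identifier so that a reader can drop duplicates. The names `record_append`, `append_at_least_once` and `read_records` are hypothetical.

```python
PAD = None  # marks a padded (unused) slot in a replica


def record_append(primary, secondaries, record, failing=()):
    """One attempt: the primary picks the offset, every replica writes there.

    Returns the offset on success, or None if any replica failed.
    """
    offset = len(primary)
    ok = True
    for replica in [primary, *secondaries]:
        if any(replica is f for f in failing):  # simulated failure
            ok = False
            continue
        replica.extend([PAD] * (offset - len(replica)))  # pad up to the offset
        replica.append(record)
    return offset if ok else None


def append_at_least_once(primary, secondaries, record, fail_first=()):
    """Retry until one attempt succeeds on all replicas."""
    offset = record_append(primary, secondaries, record, failing=fail_first)
    while offset is None:
        offset = record_append(primary, secondaries, record)
    return offset


def read_records(replica):
    """Reader-side filtering: skip padding and duplicate record ids."""
    seen, payloads = set(), []
    for slot in replica:
        if slot is PAD:
            continue
        record_id, payload = slot
        if record_id not in seen:
            seen.add(record_id)
            payloads.append(payload)
    return payloads


primary, s1, s2 = [], [], []
offset = append_at_least_once(primary, [s1, s2], (1, "x"), fail_first=[s2])
```

After this run the primary holds the record twice and `s2` holds a padded slot followed by the record, so the copies are not identical, yet every replica has the record at the returned offset and a reader that filters by identifier sees it exactly once.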

[1] George Coulouris, Jean Dollimore, Tim Kindberg and Gordon Blair, DISTRIBUTED SYSTEMS Concepts and Design Fifth Edition, Pearson Education, Inc., 2012.

Sungsoo Kim Principal Research Scientist [email protected]


CS 736 Reviews - Spring 2016


The Google File System

The Google File System . Sanjay Ghemawat, Howard Gobioff, and Shun-Tak Leung. In 19th ACM Symposium on Operating Systems Principles, Lake George, NY, October, 2003.

Posted by Michael Swift on March 29, 2016 08:16 AM | Permalink

1.Summary This paper describes the Google File System, which is widely deployed within Google as a storage platform for generating and processing the high volumes of data used by Google services as well as research. It allows storage of hundreds of terabytes of data across thousands of disks on over a thousand commodity machines and can be concurrently accessed by hundreds of clients.

2.Problem Typically distributed file systems aim for scalability, reliability and availability. However, at the time of this publication, conventional systems posed limitations and challenges that led to the GFS design:
* Component failures are the norm rather than the exception.
* Files are huge by traditional standards. Multi-GB files are the norm.
* Most files are mutated by appending new data rather than overwriting existing data. Random writes are very rare.
* High sustained bandwidth is more important than low latency.

3.Contributions The main contribution of the paper is a file system that can scale to a previously unseen level, running on commodity hardware with built-in monitoring, fault tolerance and automatic recovery. The paper illustrates the design and evaluation of the implementation of GFS with details on tackling the problems mentioned above. Some of the highlights of the architecture are:
* single master: maintains metadata info, name space, ACL
* many chunkservers: store chunks of a file as normal files on the local fs
* chunks are replicated across the system
* crash recovery: during a mutation, if one of the servers crashes, the client retries; if the master crashes, it replays the log and waits for chunkservers to check in, to rebuild the mapping

4.Evaluation The authors provide a few micro-benchmarks to illustrate the bottlenecks inherent in the GFS architecture and implementation. The read rate gets 75-80% of the theoretical limit (set by the network bandwidth) and the write rate reaches about half of the limit. Further, several clients can simultaneously append to a file at a reasonably high rate (only to be bottlenecked by network congestion). This shows the high sustained bandwidth the authors aim to achieve with the system. The authors also present data on the overhead at the master, the distribution of workload at the chunkservers and the recovery time after a crash, and show the scalability, fault tolerance and automatic recovery aspects of the system. Overall, the authors measure and report important aspects of the design. It would have been useful if they had done a comparison with another system (the system in Google before GFS) and reported how it is better or worse in different aspects (read / write rate vs number of machines, disk size etc).

5.Confusion What happens when Master fails? It seems like a single point of failure. Also How is consistency maintained across replicas? (i.e. what if replica has different length?)

Posted by: Udip | March 31, 2016 10:07 AM

Summary : This paper describes the design and implementation of the Google File System(GFS) which is a scalable distributed file system used for large distributed data-intensive applications. It provides fault tolerance, ability to run on inexpensive commodity hardware and high aggregate performance. The deviation of the nature of application workloads and technological environments observed in Google’s applications workloads from the earlier file system assumptions have driven many design decisions in GFS. GFS successfully fulfilled the storage needs of Google and was used for generating and processing data across multiple disks, machines concurrently accessed by many clients thereby providing hundreds of terabytes of storage. The paper also reports empirical data by evaluating GFS using micro-benchmarks and real world scenarios.

Problem : Google generates and processes large amounts data(files sizes in the range of gigabytes were common). a) Traditional file systems were not suitable for this large scale of storage as consistency wasn’t guaranteed in the case of multiple concurrent updates and use of locks for this would hinder scalability. b) The use of inexpensive commodity hardware for storage renders the system vulnerable to component failures frequently. GFS incorporated constant monitoring, error detection, fault tolerance and automatic recovery to address failures. c) In variety of data(large repositories, data streams, archival data) files are mutated mostly by appending new data than overwriting. Given this access pattern GFS aimed at optimizing appending performance and guaranteeing atomicity. d) GFS increased flexibility by co-designing the applications and file system API benefits.

Contributions : a) Simplified, new and relaxed design for mutations(file changes) involves a single master (makes global placement decisions, creates new chunks and replicas, coordinates system wide activities, balances load across chunkservers, reclaims unused storage, access control information and stores chunk metadata). However it is notable how GFS overcomes master being the bottleneck by keeping everything in memory, master involves only metadata operation and client caches(not data and with timeout) information reducing master-client interaction(only for metadata). b) Files divided into fixed size chunks and replicated on chunkservers. Each chunk has 3 replicas which improves read performance and reliability, but replication can be out of sync and incurs space overhead. Actual data read/write is served by chunkserver. c) Writes are pipelined and this is useful as it enables full utilization of machine’s bandwidth, avoids network bottlenecks and high latency links and it also minimizes latency by pipelining data over TCP connections and chunkserver immediately forwards data it receives. d) Consistency model - Files region can be consistent(all clients see same data regardless of the replica they are reading from) and defined(consistent and write updates are intact). Multiple concurrent writes can leave a file region in undefined state. GFS overcomes this by using record append operation. e) Large chunks are allocated using lazy allocation to reduce internal fragmentation. However problem of ‘hot spot’ can arise in case of small files consisting few chunks and many client accessing the file. f) In addition to storing master metadata in memory it is also stored on operation logs which helps in recovery by loading to a checkpoint and replaying log after that. Checkpoints are stored as compact B-trees and help keep log size in check. 
g) Lease is granted by master to primary replica - ensures operation ordering by adhering to lease grant number first and then serial numbers within each replica. h) Lazy deletion - when a file is deleted, it is logged immediately, name changed to hidden name with a timestamp, physical space lazily reclaimed by piggybacking deletion messages on HeartBeat messages. This in my opinion is good as it can be done in the background, is simple and useful in case of accidental deletion. i) Data integrity is ensured by maintaining and checking checksums. j) Copy-on-Write is used for snapshots. However, a few shortcomings in the above design aspects are that the master continues to be a single point of failure and is limited by its memory capacity.

Evaluation : The authors evaluate GFS using micro-benchmarks and real-world workloads. They initially evaluated it on a small cluster with a single master, two master replicas, 16 chunkservers and 16 clients. Aggregate read bandwidth was shown to scale well when client numbers were increased and the client performance showed negligible decrease. A similar trend was observed in the case of aggregate writes, but client performance showed a more marked decrease owing to the greater contention for multiple updates on replicas. GFS was then evaluated on research and development and data processing real-world workloads. Memory consumption by metadata in master and chunkserver was minimal, about 50MB to 100MB, owing to the highly optimized in-memory data structures. GFS also helped to achieve a desirable recovery time of about 23 minutes to restore 600GB of data replicated across chunkservers in case of a chunkserver failure. It is a wise design choice to offload caching of data from client and chunkserver as the underlying Linux buffer cache handles the data caching anyway. Memory usage has also been optimized by using smart data structures such as compact B-trees for checkpoints. The authors finally analyse the real-world workloads and their characteristics to show how the design aspects of GFS conform to the requirements. However, it would have been more useful if the above empirical data had been presented in comparison with measurements on other prevalent file systems instead of as absolute measurements, as it would have helped analyze how GFS compares to other file systems.

Confusion : 1) When is it necessary for master to access a file with its hidden name once it has been deleted? 2)Why and how is it sufficient to check only checksum of first and last blocks to ensure data integrity during overwrite operation? 3)How does lazy space allocation and padding in case of record append coexist(don’t the two mechanisms contradict with each other) ?

Posted by: Shruthi Racha | March 31, 2016 09:09 AM

Summary The paper describes the Google File System (GFS), a distributed file system that uses inexpensive commodity hardware to reliably store data over the network in a fault-tolerant way and access it efficiently to achieve high execution throughput for processing large-scale distributed data-intensive applications, which generally interact with large-sized files through append operations.

Problem A study of application workloads and the technological resources at Google revealed that component failures of inexpensive commodity hardware were common, which required the system to provide constant monitoring, error detection, fault tolerance and automatic recovery. The study also revealed that file sizes were generally large (multi-GB files were common) and that the append operation was the most common way to mutate these files. These observations necessitated revision of design assumptions regarding I/O operations and block sizes. Specifically, the common case of append needed to be optimized. As the study also showed that a majority of files were read sequentially, caching of data blocks in the client was not going to be useful. The system was also required to implement well-defined semantics for concurrent appends to the same file efficiently and to focus on achieving high sustained bandwidth over individual application latency. None of the existing distributed system designs achieved all of these goals.

Contribution The authors at Google designed and developed their own distributed system that handled the above issues. The GFS design has influenced the design of the Hadoop Distributed File System, which also uses the MapReduce framework for running its applications. While not being POSIX compliant, GFS organizes files hierarchically and provides a standard interface for file access, along with special functions such as snapshot and record append. The key architecture of GFS consists of a single master node, multiple chunkservers and multiple clients. Files in GFS are stored in chunks of 64 MB. The chunkserver stores each chunk as a Linux file, identified by a chunk handle, and stores a checksum for every contiguous 64KB region in a chunk to protect against data corruption. The master node stores metadata comprising the file and chunk namespace, the operation log and the locations of chunks. All master metadata except the mapping from chunk handle to chunk locations is stored persistently at the master and its replicas. The polling mechanism between the master and the chunkservers, called the HeartBeat, is used for updating chunk locations on the master and also for controlling chunk placement and monitoring chunkserver status. The snapshot operation creates a copy of the file and directory structure using a copy-on-write mechanism, while the record append operation ensures append-at-least-once semantics. To minimize intervention from the centralized master, GFS uses leases to maintain a consistent write order and forwards data from the client to chunkservers in a way that exploits the network topology. This design separates the control and data flow. GFS replicates chunks and uses shadow masters to ensure high availability of file data. It also uses a lazy garbage collection mechanism for cleaning up deleted files and reclaiming space. Stale chunks are identified using version numbers. Extensive diagnostic logging is performed at all nodes to isolate problems, debug and analyse performance.

Evaluation The authors present two sets of evaluations – The first of this involved measuring the rate of read / write / record append operations on a small cluster developed specifically for the purpose of GFS evaluation. The read/write/append rate was measured with varying the number of clients issuing these requests. While the rate of read operations indicated a healthy usage of the network bandwidth, the write rate was only half of the theoretical limit. This was because the network stack did not work well with the GFS pipelining data while pushing data to chunk replicas. The record append rate was found to be limited by the bandwidth of the individual chunkservers. The next set of evaluations presented a comparison between usage statistics for smaller R&D clusters and larger production level clusters used at Google. In one such comparison, various aspects of GFS were compared, such as storage, metadata overhead, read and write rates, rate of operations sent to the master, and the recovery time. Another such comparison involved chunkserver workload profiling according to operation count and bytes transferred, comparison of number of appends vs writes, and the breakdown of requests received at the master. These comparisons validated the assumptions made by the GFS designers and also validated the GFS design objectives of high performance.

While the above evaluation covered the significant aspects of GFS, I believe that the following tests could have been added. First, there was no comparative analysis against other distributed systems such as NFS or AFS. Second, the key design decision of using chunk size as 64 MB was not evaluated – a performance analysis using different chunk sizes (both smaller and greater than 64MB) would have provided more insight on this decision.

Questions/Confusion 1. Semantics for handling chunk replica inconsistencies arising due to duplicates created by the record append operation.

Posted by: Shantanu Bhate | March 31, 2016 09:00 AM

1. Summary Google File System (GFS) was introduced to specifically cater to Google's data-intensive workload providing fault-tolerance on inexpensive commodity hardware. The authors discuss the file system interface extensions designed to support large cluster of terabytes of storage across multiple disks in various machines. Optimizations like constant monitoring, replicating large chunks, and fast and automatic recovery have been discussed for concurrent appends and sequential reads of large files while ensuring that the centralized master is not a bottleneck. The design was well tested with real world problems as well, proving its feasibility.

2. Problem Keeping in mind that components fail all the time, the authors set out not to provide low latency in data access across networks but to assure high bandwidth and crash recovery that never leads to loss of data. For most workloads, concurrent append writes to multi-GB files were the most common load and supporting multiple sequential readers was vital. Atomicity with minimal overhead was essential for multiple producers appending data and a consumer reading it simultaneously. The existing file system solutions were not optimized for such workloads.

3. Contribution Keeping multiple replicas across multiple machines is the key design element for ensuring minimum data loss. With a 64MB chunk size, the client would translate the filename and byte offset into a chunk index and request master for the location and chunk handle of replicas. Master keeps track of chunks by periodic HeartBeat messages to all the chunkservers(CS) and only polls at startup or when CS joins. Operation log was the only persistent data maintained by GFS, checkpoint is in a compact B-tree that can be directly mapped into memory and optimizes namespace lookup. There are at least 3 replicas maintained and with one of them being Primary with a time-out lease. Shadow masters support only read-only access and are accessed when master is unavailable. Metadata state changes in master via logging, flushing to disks and finally applying. New checkpoint can be created without lag in incoming mutations. Having relaxed consistency implies maintaining version number of chunks and the data may be unavailable but never corrupt. Garbage collection kicks in for stale chunks via version numbering and on deletion of file the orphaned chunks are identified on CS via HeartBeat messages. Reclamation of resource upon deletion of a file is lazily carried out on master.

4. Evaluation The micro-benchmarks evaluate Read / Write / Append throughput via bandwidth utilization. Recovery time is also computed along with analysis and profiling of real Google workloads. In my opinion, separating file system control to master and data transfer amongst chunkservers and clients is a good approach to harness the most out of a distributed file system. Keeping the 3 major metadata types and no file data is a good usage of memory and also ensures no single point of failure. Chunk replica information is not persisted in master since the servers can modify them locally. File data is never cached, and snapshot is done on the same chunkserver that reduces the latency and network traffic a lot. It would have been good to evaluate these optimizations in a step-wise process to understand the performance gain better.

5. Question How are the inconsistent regions during record appends handled.

Posted by: Sejal chauhan | March 31, 2016 08:59 AM

1. Summary This paper describes Google File System which is a scalable distributed file system tailored for large data intensive workloads running on commodity machines. With goals like fault tolerance, scalability, etc. similar to previous distributed file systems, Its design is mainly driven by the workload characteristics and environment with focus on high aggregate throughput than low latency. It provides an API that is different than POSIX with operations like atomic record append and snapshot.

2. Problem There was a need to design a new distributed file system to meet Google’s growing data processing needs typically involving huge distributed workloads operating on a large number of multi-GB files. Some characteristics of their workload and operating environment include inexpensive commodity machines that fail often, files of huge size typically in GB, workloads with large streaming reads and small and rare random reads, workloads with large sequential appends to file, concurrent clients, etc.

3. Contributions The main contribution of the paper is the overall design of a distributed file system that supports huge data-intensive workloads operating on huge amounts of data. i) While many people thought that a single master design was not scalable and fault tolerant, the GFS design proves them wrong. A single master keeps the design simple. Though it might seem like a bottleneck, the GFS master serves only metadata requests and the actual data requests go to the chunkservers. ii) Splitting a file into fixed chunks of 64MB and making the chunk the unit of replication is another novel design choice. Having chunks of large size reduces the size of metadata at the master, and reads and writes on the same chunk require only one initial request to the master. iii) As opposed to POSIX semantics, which do not provide any guarantee for concurrent operations within a file, GFS provides a clear definition of file state in the presence of concurrent accesses for write and record append operations. Since concurrent appends are common among the workloads at Google, GFS provides a new atomic record append operation that guarantees that the data is written to the same offset at all the replicas. iv) Some other novel design choices include pipelined writes between replicas that fully utilize each machine's bandwidth, checksums to protect against filesystem corruption, lazy deletion that runs in the background and can also deal with cases like accidental deletion, a flat namespace with concurrent mutations to a directory, and no caching at the client.

4. Evaluation The evaluation presents some microbenchmarks and real world workloads from Google clusters. They evaluate aggregate throughputs of various operations like read, write and record append and compare it against the theoretical limit. They also show the master load which proves that a single master is not a bottleneck in their design. They also evaluated recovery times during chunkserver failures. They also present the real world workload characteristics in two GFS clusters. The evaluation didn’t show the downtime that can be caused due to master failure.

Posted by: Aishwarya Ganesan | March 31, 2016 08:59 AM

1. Summary The paper presents the design and implementation of the Google File system which is a scalable distributed system for large distributed data-intensive applications, providing fault tolerance running on inexpensive commodity hardware and delivers high aggregate performance(throughput) to a large number of clients. The design was driven analyzing the application workloads at Google and thus traditional choices in design were reexamined to explore suitable design points.

2. Problem The application workloads and technological environment at Google is different from existing compute and storage file systems. Component failures are the norm rather than exception and thus needs constant monitoring,error detection, fault tolerance and automatic recovery. Also, the files are huge by traditional standards, with Multi-GB files being the common case. Another aspect of the environment is that most files are mutated by appending new data rather than overwriting existing data, which makes random-writes practically non-existent. Reads performed will be sequential. They have also addressed the problem of atomic append operation for multiple clients to append concurrently without using synchronization constructs.

3. Contributions A GFS cluster consists of a single master and multiple chunkservers, and is accessed by multiple clients. Files are divided into fixed-size chunks, which are replicated for reliability. Chunkservers store chunks, each identified by a globally unique chunk handle, on local disks as Linux files. The master maintains all file system metadata (the namespace, access control information, the mapping from files to chunks, and the current locations of chunks) and controls system-wide activities such as chunk lease management, garbage collection, and chunk migration. Clients contact the master only for metadata; all data-bearing communication goes directly to the chunkservers. Having a single master simplifies the design and lets the master make chunk placement and replication decisions using global knowledge. The chunk size is chosen to be quite large, which reduces client-master interaction, reduces network overhead because a client can keep a persistent TCP connection to a chunkserver, and shrinks the metadata stored at the master (it fits in memory even for very large clusters). Mutations are logged persistently to an operation log at the master to ensure reliability and recoverability after a master crash. Another useful design point is the separation of data flow from control flow, which lets GFS use the network efficiently. A snapshot operation is supported to copy a file or directory almost instantly. The master implements a chunk-level replica placement policy to maximize data reliability and availability. Garbage collection is performed lazily at the file and chunk levels to amortize its cost. Both the master and the chunkservers are designed to restore their state quickly after a failure, backed by master replication and chunk replication.

4. Evaluation To illustrate performance, the authors ran micro-benchmarks to analyze read, write, and append performance. They compared GFS performance with the theoretical maximum for these operations, calculated from the cluster configuration. For real-world clusters, the authors point out that the metadata stored at the master is very small and easily fits in the master's memory. They also present experimental results showing that the master is not the bottleneck in the system, with the load at the master being around 300-500 operations per second. Thus they show that scaling will not be a problem with this design. Results for quick recovery are also presented, based on re-replication priorities (2-20 min for about 600 GB, depending on the scenario). The read rates are very high, about 70% of the theoretical value, while writes are slightly slower than the authors expected. It would have been interesting to see these experiments compared against existing file and storage systems for these applications.
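The claim that large chunks keep the master's metadata small can be checked with a little arithmetic. The sketch below assumes the 64 MB chunk size used by GFS and, as an upper bound taken from the GFS paper, about 64 bytes of master metadata per chunk; the function names are hypothetical and chosen only for illustration.

```python
CHUNK_SIZE = 64 * 1024 * 1024   # 64 MB chunk size
METADATA_PER_CHUNK = 64         # assumed upper bound, in bytes per chunk


def chunk_index(byte_offset: int, chunk_size: int = CHUNK_SIZE) -> int:
    """Translate a byte offset within a file into a chunk index,
    which is what a client would send to the master along with the file name."""
    return byte_offset // chunk_size


def chunks_needed(data_bytes: int, chunk_size: int = CHUNK_SIZE) -> int:
    """Number of chunks required to hold data_bytes (ceiling division)."""
    return -(-data_bytes // chunk_size)


def master_metadata_bytes(data_bytes: int) -> int:
    """Rough estimate of chunk metadata the master holds for data_bytes of file data."""
    return chunks_needed(data_bytes) * METADATA_PER_CHUNK


if __name__ == "__main__":
    total = 300 * 2**40  # 300 TB of file data
    print(chunks_needed(total))                  # number of 64 MB chunks
    print(master_metadata_bytes(total) / 2**20)  # estimated metadata in MiB
```

Under these assumptions, 300 TB of file data needs only a few hundred MiB of chunk metadata, which is consistent with the point that it fits in the master's memory.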

5. Confusion Could you explain the state of a file after an atomic append operation? How is an inconsistent file state repaired? Can replicas have slightly different contents at some stage?

Posted by: Anshul Purohit | March 31, 2016 08:54 AM

Summary: In this paper the authors discuss the design and implementation techniques used in GFS, a scalable distributed file system for large data-intensive applications. GFS provides fault tolerance and high aggregate performance. In the design, the authors have completely given up the POSIX interface and kept the system simple enough to work optimally on their anticipated workload. The file system is successfully deployed within Google as a storage platform for processing large datasets.

Problem: The major problem the authors solve in the paper is providing fault tolerance and high aggregate performance, along with scalability, in a distributed file system. They target GFS at workloads with huge files that are mostly appended to or read sequentially.

Contribution: The paper revisits traditional file system assumptions in light of Google's anticipated workload and proposes some radical changes for distributed file systems. The first concerns fault tolerance. GFS uses heartbeat messages so that the master server constantly monitors the chunkservers in its cluster. It uses checksums to detect data corruption caused by failing components and driver issues. It also provides automatic recovery through shadow masters and through replication of master state and of chunks across different chunkservers. The second concerns performance: based on observations of file size distribution and access patterns, GFS uses a large chunk size and decouples data transfer from control transfer between clients, chunkservers, and the master. For concurrent access it supports an append-at-least-once mechanism, along with chunk leases and explicitly serialized mutation orders.
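The checksum-based corruption detection mentioned above can be sketched as follows. This is a minimal illustration, not the GFS implementation: it assumes data is checked in 64 KB blocks with a 32-bit checksum per block, and it uses CRC-32 from Python's `zlib` as a stand-in for whatever checksum function GFS actually uses. The function names are hypothetical.

```python
import zlib
from typing import List

BLOCK_SIZE = 64 * 1024  # assumed checksum block size (64 KB)


def block_checksums(data: bytes, block_size: int = BLOCK_SIZE) -> List[int]:
    """Compute one 32-bit checksum per fixed-size block of chunk data."""
    return [
        zlib.crc32(data[start:start + block_size])
        for start in range(0, len(data), block_size)
    ]


def find_corrupt_blocks(data: bytes, expected: List[int],
                        block_size: int = BLOCK_SIZE) -> List[int]:
    """Return the indices of blocks whose checksum no longer matches."""
    actual = block_checksums(data, block_size)
    return [i for i, (a, e) in enumerate(zip(actual, expected)) if a != e]


if __name__ == "__main__":
    original = bytes(200_000)            # four blocks of zero bytes
    stored = block_checksums(original)   # checksums recorded at write time

    damaged = bytearray(original)
    damaged[70_000] = 1                  # flip one byte inside the second block
    print(find_corrupt_blocks(bytes(damaged), stored))
```

A chunkserver that finds a mismatching block on read can refuse to return the data, so the reader falls back to another replica while the bad copy is re-replicated.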

Evaluation: The authors evaluated the performance of GFS with a few micro-benchmark experiments for reads, writes, and record appends. They find that read efficiency drops as the number of readers increases, since multiple readers are more likely to read from the same chunkserver at once. Writes were slower than expected, but this does not significantly affect the aggregate write bandwidth. They also examined real-world clusters used within Google, where read rates were much higher than write rates. Overall it is a great paper with many techniques working together, and since the Google File System is tailored to Google workloads, I think the evaluation is appropriate.

Confusion: I would like to discuss the tradeoffs incurred in the design, and how file security of the kind the POSIX interface provides is implemented here.

Posted by: Ankur Srivastava | March 31, 2016 08:43 AM

1.Summary: This paper is about the design and implementation of the Google File System (GFS), a scalable distributed file system for large distributed data-intensive applications. The main features of the file system are fault tolerance and high aggregate performance.

2.Problem: Traditional distributed file systems fall short for data-intensive applications in the following ways: 1) Inexpensive commodity components accessed by client machines are prone to failure and do not recover easily, so fault tolerance must be integral to the system. 2) Multi-GB files are common and must be optimized for, rather than small files. 3) Large appends are far more frequent than random writes and must be made efficient. 4) Co-designing the file system API and the applications makes the system flexible. GFS is built on these assumptions.

3.Contributions: GFS employs a variety of simple techniques as part of the system. Following are some of the key contributions: 1) A GFS cluster has a single master and multiple chunkservers storing fixed-size chunks, each with a globally unique 64-bit chunk handle assigned by the master at creation. The master maintains all the metadata, including the file namespace, the mapping from files to chunks, chunk locations, access permissions, etc. Clients get chunk information from the master and contact the chunkservers for reads and writes. 2) Error detection uses 'heartbeat' messages exchanged with chunkservers to collect state and give instructions. Fault tolerance is provided by replicating chunks across chunkservers and by having a 'shadow' master that shares the master's metadata and logging information. 3) A large chunk size of 64 MB optimizes for workloads that read and write large files sequentially, and reduces network overhead through persistent TCP connections. 4) Snapshots create a copy of a file or directory tree almost instantaneously, and atomic record appends use 'atomically at least once' semantics. This suits the case where multiple clients append to the same file concurrently. Duplicates and inconsistencies are handled through checksumming. 5) Lazy garbage collection at the file and chunk levels keeps the design simple. On file deletion, instead of reclaiming physical resources immediately, the file is renamed to a hidden name and its chunk entries are later removed from the metadata. Orphaned chunks are then found across the chunkservers and reclaimed.
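The lazy garbage collection in point 5 can be sketched in a few lines. This is a toy model under stated assumptions, not GFS code: the `Master` class, the `.deleted.` prefix, and the three-day grace period constant are illustrative choices, and timestamps are passed in explicitly so the example is deterministic.

```python
HIDDEN_PREFIX = ".deleted."        # hypothetical marker for hidden (deleted) files
GRACE_SECONDS = 3 * 24 * 3600      # assumed grace period before metadata is dropped


class Master:
    """Toy master that tracks file-to-chunk mappings and deletes lazily."""

    def __init__(self):
        self.files = {}        # path -> list of chunk handles
        self.deleted_at = {}   # hidden path -> time of deletion

    def create(self, path, handles):
        self.files[path] = list(handles)

    def delete(self, path, now):
        # Deletion only renames the file; no chunk is reclaimed yet.
        hidden = HIDDEN_PREFIX + path
        self.files[hidden] = self.files.pop(path)
        self.deleted_at[hidden] = now

    def scan_namespace(self, now):
        # Periodic scan: drop hidden files whose grace period has expired.
        for hidden, ts in list(self.deleted_at.items()):
            if now - ts >= GRACE_SECONDS:
                del self.files[hidden]
                del self.deleted_at[hidden]

    def orphans(self, reported_handles):
        # Chunks a chunkserver reports that no file references any more.
        live = {h for handles in self.files.values() for h in handles}
        return set(reported_handles) - live


if __name__ == "__main__":
    master = Master()
    master.create("/logs/a", [1, 2])
    master.create("/logs/b", [3])
    master.delete("/logs/a", now=0)
    print(master.orphans({1, 2, 3}))          # nothing reclaimable yet
    master.scan_namespace(now=GRACE_SECONDS)
    print(master.orphans({1, 2, 3}))          # chunks of /logs/a are now orphaned
```

The design choice this illustrates is that deletion is cheap and reversible until the scan runs, and the actual reclamation is folded into background work the master and chunkservers already do.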

4.Evaluations: The authors have based the evaluation on micro-benchmarks and real-world clusters. In the micro-benchmarks, the performance of reads, writes, and record appends for N clients is measured and compared against the theoretical limit. The observed read rate is 80% of the ideal limit and drops as the number of clients increases, aggregate write rates are about half of the limit, and record append rates are lower due to network congestion. Further, two real-world clusters are examined, one with many smaller reads and the other with large sequential reads. Here, they evaluate the load on the master and find that it is not a bottleneck, with the master handling thousands of file accesses per second thanks to efficient searches. Chunkserver failure handling was evaluated by killing one of the chunkservers holding 15000 chunks and 600 GB of data, after which all of the chunks were recovered in 23.2 minutes. The authors have measured the system well and provided performance metrics in the form of tables and graphs, but have not really drawn conclusions from them or explained why the results are what they are. The efficiency of GFS could have been shown better by comparing against the same workloads on a different distributed file system.
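The recovery figure above translates into an effective re-replication rate with simple arithmetic. The helper below is a hypothetical illustration; it assumes 1 GB = 1024 MB, and the result shifts slightly if decimal units are used instead.

```python
def effective_rate_mb_per_s(data_gb: float, minutes: float,
                            mb_per_gb: int = 1024) -> float:
    """Average throughput needed to copy data_gb in the given number of minutes."""
    return data_gb * mb_per_gb / (minutes * 60)


if __name__ == "__main__":
    # 600 GB restored in 23.2 minutes, as reported for the chunkserver failure test.
    rate = effective_rate_mb_per_s(600, 23.2)
    print(f"{rate:.0f} MB/s")
```

This works out to roughly 440 MB/s of aggregate replication bandwidth across the cluster, which gives a sense of how much background traffic a single chunkserver failure generates.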

5.Confusion: How does lazy space allocation solve the internal fragmentation problem?

Posted by: Sharanya Devaraj | March 31, 2016 08:40 AM

Summary This paper describes the design and implementation of the Google File System (GFS), a scalable distributed file system designed by Google specifically to support its large distributed data-intensive applications.

Problem Large-scale data processing needs at Google led the authors to revisit some of the design goals of the file system. Specifically, the requirement was to create a fault-tolerant and scalable file system that could run on commodity hardware and give hundreds of clients concurrent access to terabytes of data stored across about a thousand machines. Driven by the unique requirements of their application workloads, the authors proposed GFS.

Evaluation The authors have presented their evaluation of GFS using micro-benchmarks as well as real-world workloads. A small GFS cluster consisting of one master, two master replicas, 16 chunkservers, and 16 clients was used to micro-benchmark the performance of GFS. For their micro-benchmarks, the authors compared the performance of read, write, and record append operations against the theoretical limit established by network bandwidth. The read rate reached 75% to 80% of the theoretical limit, while the write rate was only about half of it. They attribute the slow write rates to their network stack, which does not interact well with the pipelining scheme used to push data to chunk replicas. The authors also examine the performance of GFS on two kinds of real-world clusters: one used for research and development, and the other used for production at Google. For both types of cluster, they demonstrate that GFS sustains high read, write, and record append throughput even in the presence of chunkserver and disk failures.

Given that GFS is highly tailored for large-scale data-intensive applications run within Google itself, I think the authors are justified in evaluating it primarily on the workloads seen by their own clusters. However, I feel the authors could have elaborated more and justified some of their design choices through their evaluation. For example, what is an appropriate chunk size? Is 64 MB good enough? How does performance vary with different chunk sizes? What is a good replication factor? How does increasing the replication factor affect network congestion and the performance of the overall file system? I think it would be interesting to see answers to these questions.

Confusion How are access control and permissions implemented in GFS?

Posted by: Saket Saurabh | March 31, 2016 08:36 AM